Efficient Karpenter Autoscaling for K8s Clusters

Learn how Karpenter autoscaling improves Kubernetes cluster efficiency, reduces costs, and simplifies node management for dynamic cloud workloads.

Managing cloud costs is a primary challenge for any team running Kubernetes at scale. Traditional autoscalers often lead to significant waste through overprovisioning—launching large, expensive nodes to accommodate small workloads because they are constrained by predefined node groups. This is where Karpenter autoscaling provides a decisive advantage. By analyzing the precise resource needs of individual pods, Karpenter provisions perfectly sized instances just-in-time, drastically reducing idle capacity and lowering your cloud bill. This article details how Karpenter’s dynamic provisioning model works, how it optimizes costs without sacrificing performance, and the best practices for configuring it to maximize savings.

Unified Cloud Orchestration for Kubernetes

Manage Kubernetes at scale through a single, enterprise-ready platform.

Key takeaways:

- Provision nodes based on workload needs: Karpenter bypasses traditional node groups to launch compute resources tailored to the exact requirements of unschedulable pods. This pod-driven approach leads to faster scaling, reduced resource waste, and significant cost savings.

- Define precise configurations for production: Karpenter's effectiveness depends on accurate inputs. Ensure all production pods have well-defined resource requests and limits, and configure node lifecycle policies like consolidation and expiration to maintain cluster health and prevent cost overruns.

- Manage fleet-wide policies with GitOps: To maintain consistency across multiple clusters, manage your Karpenter configurations as code. A platform like Plural uses a GitOps workflow to enforce standardized NodePool and security policies, providing a single source of truth for your entire autoscaling infrastructure.

What Is Karpenter and How It Works

Karpenter is an open-source autoscaler built by AWS that replaces the rigid, node-group–driven model used by the standard Kubernetes Cluster Autoscaler. Instead of scaling predefined groups, it talks directly to your cloud provider’s compute APIs (such as EC2) and provisions nodes based on the real scheduling requirements of unschedulable pods.

This pod-level visibility gives Karpenter far more flexibility. It can choose from any available instance type, size, or purchasing option and launch exactly the compute a workload needs—general purpose, GPU, memory-optimized, or otherwise—without requiring separate node groups for each category. The result is faster provisioning, improved bin-packing, and substantially less unused capacity.

Karpenter runs as a controller inside your cluster, watching for unschedulable pods and responding immediately. When scheduling pressure appears, it launches nodes individually and just-in-time, simplifying both your autoscaling strategy and your node group architecture.

Architecture: NodePools and NodeClasses

Karpenter relies on CRDs to model its configuration, with two primary resources you’ll work with:

NodePools define the high-level scheduling rules for provisioned nodes—allowed instance types, zones, taints, startup taints, and any constraints you want for grouping workloads. Multiple NodePools let you enforce different placement and scheduling policies without managing separate autoscaling groups.

NodeClasses encapsulate cloud-provider details such as AMI families, security groups, and IAM instance profiles. By separating provider configuration from scheduling logic, you can adjust infrastructure settings independently of workload-level rules.

This separation keeps Karpenter’s configuration clean and composable, which becomes especially important in large multi-team or multi-environment clusters.
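As a rough illustration of how the two resources reference each other, the sketch below builds a NodePool and an EC2NodeClass as plain Python dictionaries and prints them as JSON. The field names follow the karpenter.sh/v1 and karpenter.k8s.aws/v1 APIs as I understand them; the resource names, IAM role, AMI ID, and discovery tag are hypothetical placeholders, so verify everything against the CRDs installed in your cluster.

```python
import json

# Hypothetical values used only for illustration.
NODE_CLASS_NAME = "default"
DISCOVERY_TAG = {"karpenter.sh/discovery": "my-cluster"}

# Cloud-provider details: image, network placement, IAM role.
node_class = {
    "apiVersion": "karpenter.k8s.aws/v1",
    "kind": "EC2NodeClass",
    "metadata": {"name": NODE_CLASS_NAME},
    "spec": {
        "role": "KarpenterNodeRole-my-cluster",                  # assumed role name
        "amiSelectorTerms": [{"id": "ami-0123456789abcdef0"}],   # placeholder AMI ID
        "subnetSelectorTerms": [{"tags": DISCOVERY_TAG}],
        "securityGroupSelectorTerms": [{"tags": DISCOVERY_TAG}],
    },
}

# Scheduling rules: which kinds of nodes may be created for pending pods.
node_pool = {
    "apiVersion": "karpenter.sh/v1",
    "kind": "NodePool",
    "metadata": {"name": "general-purpose"},
    "spec": {
        "template": {
            "spec": {
                # The NodePool points at the NodeClass by name.
                "nodeClassRef": {
                    "group": "karpenter.k8s.aws",
                    "kind": "EC2NodeClass",
                    "name": NODE_CLASS_NAME,
                },
                "requirements": [
                    {"key": "kubernetes.io/arch", "operator": "In",
                     "values": ["amd64", "arm64"]},
                    {"key": "karpenter.sh/capacity-type", "operator": "In",
                     "values": ["spot", "on-demand"]},
                ],
            }
        }
    },
}

if __name__ == "__main__":
    for manifest in (node_class, node_pool):
        print(json.dumps(manifest, indent=2))
```

Because the NodePool only names its NodeClass, several NodePools can share one set of infrastructure settings.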

Node Provisioning Workflow

The provisioning cycle begins as soon as the Kubernetes scheduler marks a pod as unschedulable. Karpenter reacts immediately:

- It reads the pod’s resource requests, selectors, affinities, tolerations, and other scheduling constraints.

- It groups pending pods with compatible requirements to optimize node packing.

- Using these aggregated constraints, it selects the lowest-cost instance type that satisfies them.

- It launches a new node through the cloud provider API and waits for it to become Ready.

- Once the node joins the cluster, the scheduler places the pending pods onto it.

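Karpenter typically makes the provisioning decision within seconds; the remaining wait is the time the new instance takes to boot and register with the cluster. This keeps pending time short and lets workloads scale smoothly under load.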
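The grouping and selection steps above can be sketched as a toy model. This is not Karpenter's implementation: the catalog, instance names, and prices are invented, and the model ignores daemonset overhead, kubelet reservations, and splitting a group across several nodes.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pod:
    name: str
    cpu: float                 # requested vCPU
    memory_gib: float          # requested memory in GiB
    arch: str = "amd64"
    zone: Optional[str] = None


@dataclass(frozen=True)
class InstanceType:
    name: str
    cpu: float
    memory_gib: float
    arch: str
    hourly_price: float


# Hypothetical catalog; names and prices are made up for the example.
CATALOG = [
    InstanceType("small-2x4", 2, 4, "amd64", 0.04),
    InstanceType("medium-4x16", 4, 16, "amd64", 0.17),
    InstanceType("large-8x32", 8, 32, "amd64", 0.34),
    InstanceType("arm-4x8", 4, 8, "arm64", 0.12),
]


def group_pending(pods):
    """Group pods that share the same hard constraints (architecture, zone)."""
    groups = defaultdict(list)
    for pod in pods:
        groups[(pod.arch, pod.zone)].append(pod)
    return dict(groups)


def cheapest_fit(pods, catalog=CATALOG):
    """Return the cheapest single instance type that fits the whole group."""
    arch = pods[0].arch
    cpu = sum(p.cpu for p in pods)
    mem = sum(p.memory_gib for p in pods)
    candidates = [i for i in catalog
                  if i.arch == arch and i.cpu >= cpu and i.memory_gib >= mem]
    return min(candidates, key=lambda i: i.hourly_price) if candidates else None


def plan(pods):
    """Map each constraint group to the instance type chosen for it."""
    return {key: cheapest_fit(group) for key, group in group_pending(pods).items()}


if __name__ == "__main__":
    pending = [Pod("api-1", 1, 2), Pod("api-2", 1, 2),
               Pod("worker-1", 2, 4, arch="arm64")]
    for key, choice in plan(pending).items():
        print(key, "->", choice.name if choice else "no fit")
```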

Real-Time, Workload-Aware Decision Making

Karpenter is event-driven. Rather than polling, it continuously watches the Kubernetes API for pods the scheduler can’t place. When one appears, it immediately evaluates the pod’s specification to understand exactly what resources, topology, and hardware features are required.

Because it is not bound to predefined node groups, Karpenter can pick from any instance type that meets those constraints—whether that means a GPU node in a specific availability zone or a small burstable instance for a lightweight job. This design prevents both overprovisioning and resource starvation and ensures your cluster scales based on real workload needs, not static assumptions.

Platforms like Plural build on these capabilities by offering centralized governance, multi-cluster visibility, and GitOps-driven configuration, making it easier to adopt Karpenter consistently across large environments.

How Karpenter Differs from Traditional Kubernetes Autoscaling

Karpenter represents a major redesign of Kubernetes autoscaling. While the Cluster Autoscaler (CAS) has been the default for years, it inherits structural limitations: it scales fixed node groups, reacts slowly, and often provisions more compute than necessary. Karpenter removes these constraints by treating each node as an individually optimized resource, created directly from pod requirements rather than from predeclared capacity pools. This architectural shift enables faster, more accurate, and more cost-efficient scaling that aligns with the elasticity expected in cloud-native workloads.

The Core Limitations of Cluster Autoscaler

CAS monitors for unschedulable pods and increments the size of a cloud Auto Scaling Group to make room. This design forces you to define node groups up front—typically locked to a single or small set of instance types. In practice, this leads to:

- Rigid capacity definitions that can't adapt to workload variation

- Large nodes being added to host small pods

- Multi-minute delays while an ASG reacts and nodes join the cluster

Because CAS manages groups instead of individual nodes, it simply can’t optimize instance selection or bin packing. The result is slower response times and persistent overprovisioning.

Karpenter’s Node-First, API-Driven Model

Karpenter bypasses Auto Scaling Groups entirely. It watches for unschedulable pods, inspects their resources and placement constraints, and provisions the most suitable instance directly through the cloud provider’s compute API. This includes selecting the right processor architecture, instance size, hardware accelerators, or availability zone. Nodes become workload-specific rather than workload-agnostic, which eliminates the need for sprawling node group definitions.

By analyzing pod requirements in real time, Karpenter makes per-node decisions that traditional autoscalers cannot. Each launch is effectively a custom fit for the workload.

Speed and Cost Advantages

Karpenter’s architecture provides two immediate benefits:

Speed:

Karpenter calls the compute API directly instead of waiting on ASG reconciliation loops, so launch requests go out within seconds of a pod becoming unschedulable. Pods move from pending to running much faster, which improves application responsiveness under load spikes.

Cost Efficiency:

Karpenter’s fine-grained provisioning eliminates the habitual overprovisioning that comes from static node groups. It can select from the full catalog of instance types—including Spot capacity—to minimize cost while meeting exact workload requirements. This flexibility leads to meaningful and repeatable savings, especially in heterogeneous environments.

Platforms like Plural extend these advantages by adding governance, observability, and GitOps automation, allowing teams to standardize and scale Karpenter usage across many clusters.

How Karpenter Makes Scaling Decisions

Karpenter scales clusters by focusing on the real-time needs of individual unscheduled pods. Instead of reacting within the boundaries of a predefined node group, it provisions compute directly through the cloud provider’s API. This makes scaling both faster and more precise. Nodes are created only when needed and are sized specifically for the pods that triggered the scaling event. The result is infrastructure that adapts to workload demand rather than relying on static assumptions.

Analyzing Pod Scheduling Requirements

When the Kubernetes scheduler cannot place a pod and marks it unschedulable (the pod remains in the Pending phase), Karpenter immediately evaluates its full scheduling spec. It reads CPU and memory requests, node selectors, affinities, tolerations, and topology constraints to determine exactly what kind of node is required. This level of insight lets Karpenter provision nodes that match the workload’s characteristics, down to instance family, size, zone, or required hardware devices. Because it interprets pod constraints directly, it can resolve scheduling gaps without introducing unnecessary capacity.

Resource Optimization Through Continuous Rebalancing

Karpenter doesn’t stop at provisioning nodes. It continuously analyzes the cluster for inefficiencies.

- Consolidation: Identifies underutilized or empty nodes, drains them safely, and repacks their pods onto fewer nodes.

- Deprovisioning: Terminates nodes that remain unused beyond a threshold to eliminate idle spend.

These operations shrink your cluster footprint and reduce costs, all while preserving workload availability.

Instance Selection and Bin Packing

Once Karpenter aggregates the requirements of pending pods, it computes the minimal set of nodes required and chooses the most cost-efficient instance types. NodePools define the allowable families and purchasing options—Spot, On-Demand, or a mix—but Karpenter is free to select any instance within those constraints.

Karpenter uses bin-packing to place multiple compatible pods onto the smallest number of nodes. It then queries the cloud provider for pricing and availability and launches the cheapest instances that satisfy all constraints.
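The sketch below illustrates bin packing with the classic first-fit-decreasing heuristic, then compares the total cost of packing onto different node sizes. This is a stand-in technique chosen for clarity, not Karpenter's actual algorithm; the node sizes and prices (in cents per hour) are hypothetical, and only CPU is modeled.

```python
def first_fit_decreasing(requests, capacity):
    """Pack CPU requests onto equal-sized nodes using first-fit-decreasing."""
    nodes = []  # each node is the list of requests placed on it
    for request in sorted(requests, reverse=True):
        if request > capacity:
            raise ValueError(f"request {request} exceeds node capacity {capacity}")
        for node in nodes:
            if sum(node) + request <= capacity:
                node.append(request)
                break
        else:
            # No existing node had room, so open a new one.
            nodes.append([request])
    return nodes


def cheapest_packing(requests, sizes):
    """Pick the node size with the lowest total price for this set of requests.

    `sizes` maps vCPU capacity to an hourly price in cents (made-up numbers).
    Returns (total_cents, capacity, nodes) or None if nothing fits.
    """
    options = []
    for capacity, price_cents in sizes.items():
        if max(requests) > capacity:
            continue
        nodes = first_fit_decreasing(requests, capacity)
        options.append((len(nodes) * price_cents, capacity, nodes))
    return min(options) if options else None


if __name__ == "__main__":
    pending_cpu = [2, 1, 3, 1, 2, 1]
    total, capacity, nodes = cheapest_packing(pending_cpu, {4: 20, 8: 36})
    print(f"{len(nodes)} nodes of {capacity} vCPU, {total} cents/hour: {nodes}")
```

With these numbers, three 4-vCPU nodes (60 cents) beat two 8-vCPU nodes (72 cents), which shows why the cheapest choice depends on how the requests pack rather than on node count alone.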

This dynamic instance selection delivers compute that is both right-sized and cost optimized—far beyond what static node groups can provide. Platforms like Plural enhance this by giving teams governance, consistency, and GitOps workflows for managing Karpenter configurations across large fleets.

Key Benefits of Using Karpenter for Autoscaling

Karpenter accelerates scaling by reacting directly to unscheduled pods and provisioning nodes through the cloud provider’s API. It bypasses the multi-step workflow of node-group–based autoscalers, delivering capacity within seconds. Because Karpenter evaluates each pod’s constraints—selectors, affinities, resources—it launches nodes that are an exact match for the workload rather than generic capacity pulled from a predefined group. This speed is especially valuable for spiky traffic patterns, rapid deployments, or latency-sensitive applications where slow scale-ups impact user experience.

Cost Optimization Through Dynamic Provisioning

Karpenter reduces cloud spend by eliminating the structural overprovisioning that comes from static node groups. Nodes are launched only when needed, using the minimal instance size that satisfies the aggregated resource demands of pending pods, and terminated as soon as they become unnecessary. This just-in-time model ensures you only pay for the compute you actually consume. Karpenter can also prioritize Spot capacity or choose from a wide range of instance families, automatically selecting the cheapest option that satisfies your constraints. This flexibility produces predictable and sustained cost savings.

Simplified Cluster Operations

Traditional autoscalers force teams to manage multiple node groups to support different instance types, architectures, or capacity classes. This increases configuration overhead and introduces opportunities for drift. Karpenter replaces this model with a small number of flexible NodePool definitions in which you declare high-level constraints (CPU architecture, allowed families, zone preferences, capacity type) and let the controller manage the details. This removes a large portion of node lifecycle maintenance and lets teams focus on application behavior rather than infrastructure plumbing.

Broad Instance Selection and Better Bin Packing

Because Karpenter is not tied to node groups, it can choose freely from the entire catalog of instance types offered by your cloud provider. When pods are pending, Karpenter evaluates their combined requirements and selects the most efficient instances for bin packing. Memory-heavy jobs can land on memory-optimized instances, batch workloads can run on Spot, and ML workloads can get GPU-enabled nodes—all from the same NodePool configuration. This workload-aware provisioning improves utilization and drives additional cost efficiency.

Platforms like Plural complement these benefits by adding governance, insights, and GitOps workflows, enabling consistent Karpenter adoption across large multi-cluster environments.

Configure Karpenter for Production

Running Karpenter in production requires more than a default install. Production clusters demand predictable provisioning, strict security, cost controls, and consistent behavior across environments. The best approach is to manage all Karpenter resources declaratively—NodePools, NodeClasses, constraints, and lifecycle policies—just as you would manage your application code.

Platforms like Plural strengthen this workflow by enforcing GitOps. Karpenter definitions live in version-controlled repositories, changes are auditable, and updates flow consistently across staging and production. This eliminates drift and ensures every cluster follows the same operational patterns.

Configure NodePools for Specialized Workloads

NodePools define the scheduling and provisioning constraints for nodes Karpenter creates. In production, avoid relying on a single, catch-all NodePool. Instead, use multiple specialized pools aligned with workload classes—general-purpose web services, GPU-based ML jobs, memory-heavy batch tasks, or even team-specific pools for isolation and cost reporting.

Specialized NodePools give you tighter control over instance families, architectures, pricing models, and scheduling rules. They also prevent expensive resources (like GPU instances) from being used by pods that don’t need them.

Set Up the EC2NodeClass for Reliable Infrastructure

The EC2NodeClass determines how Karpenter configures the underlying EC2 instances. This is where you enforce network, security, and IAM standards. In production, always:

- Launch nodes into private subnets

- Attach least-privilege security groups

- Specify the correct IAM instance profile

- Control EBS, networking, and user data settings

By linking each NodePool to a specific EC2NodeClass, you ensure that all provisioned nodes inherit consistent infrastructure and security posture without manual intervention.

Apply Resource Limits and Lifecycle Constraints

Karpenter’s lifecycle settings help control cost and maintain cluster hygiene:

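- consolidateAfter, combined with consolidationPolicy: WhenEmpty in the NodePool’s disruption block, terminates empty nodes after a grace period (the older Provisioner API called this ttlSecondsAfterEmpty)

- expireAfter enforces regular node rotation for security patching (formerly ttlSecondsUntilExpired in the Provisioner API)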

These policies prevent idle-cost buildup and ensure nodes get recycled on a controlled schedule.
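As a hedged sketch of how these lifecycle settings might look in a NodePool, the helper below produces the relevant manifest fragment. It assumes the karpenter.sh/v1 layout as I understand it, where empty-node cleanup lives under spec.disruption and expiry under spec.template.spec.expireAfter; earlier API versions place these fields differently, so check your installed CRD. The function name and parameters are hypothetical.

```python
import json


def lifecycle_fragment(empty_grace_seconds, max_node_age_hours):
    """Build the NodePool fields for empty-node cleanup and node expiry.

    Assumes the karpenter.sh/v1 field layout; older versions differ.
    """
    return {
        "spec": {
            "disruption": {
                # Only remove nodes that have no workload pods left.
                "consolidationPolicy": "WhenEmpty",
                # Grace period before an empty node is terminated.
                "consolidateAfter": f"{empty_grace_seconds}s",
            },
            "template": {
                "spec": {
                    # Maximum node lifetime before it is rotated.
                    "expireAfter": f"{max_node_age_hours}h",
                }
            },
        }
    }


if __name__ == "__main__":
    # Example: 30-second grace period, rotate nodes every 30 days.
    print(json.dumps(lifecycle_fragment(30, 720), indent=2))
```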

Accurate CPU and memory requests in your pod specs are equally important. Karpenter uses these values for bin packing and instance selection. Without reliable requests, it cannot right-size nodes, which leads to higher cloud bills and less predictable scheduling.

Pin AMIs and Enforce Security Standards

Production demands deterministic behavior, so avoid “latest” AMI lookups. Always pin a specific, hardened AMI version in your EC2NodeClass. Your security team should maintain a regularly scanned, approved base image.

Pinning AMIs ensures new nodes won’t introduce untested updates or regressions. You can also use NodePool constraints to exclude expensive or non-compliant instance families, giving you stronger control over both security and spend.
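One way to enforce AMI pinning in a GitOps pipeline is a small lint step over EC2NodeClass manifests. The helper below is a hypothetical check, not part of Karpenter: it assumes the amiSelectorTerms field with id, alias, and tags terms as found in recent EC2NodeClass versions, and it treats anything other than an explicit AMI ID or a versioned alias as unpinned.

```python
def unpinned_ami_terms(node_class):
    """Return amiSelectorTerms that do not resolve to one fixed image."""
    flagged = []
    terms = node_class.get("spec", {}).get("amiSelectorTerms", [])
    for term in terms:
        if "id" in term:
            continue  # an explicit AMI ID is pinned
        alias = term.get("alias", "")
        if alias and not alias.endswith("@latest"):
            continue  # alias with an explicit version is treated as pinned
        # Tag or name selectors and "@latest" aliases can change over time.
        flagged.append(term)
    return flagged


if __name__ == "__main__":
    # Placeholder manifest for illustration.
    manifest = {
        "kind": "EC2NodeClass",
        "spec": {
            "amiSelectorTerms": [
                {"id": "ami-0123456789abcdef0"},
                {"alias": "al2023@latest"},
                {"tags": {"team": "platform"}},
            ]
        },
    }
    for term in unpinned_ami_terms(manifest):
        print("unpinned AMI selector:", term)
```

Running a check like this in CI lets a pull request fail before an unpinned selector reaches a production cluster.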

Platforms like Plural make it easier to apply these configurations consistently across many clusters, ensuring your production environments remain secure, cost-efficient, and predictable.

Overcome Common Karpenter Implementation Challenges

Karpenter streamlines autoscaling, but it does not automatically optimize cost or performance without accurate workload data. It provisions nodes based strictly on pod resource requests, tolerations, affinities, and other constraints. If applications over-request CPU or memory, Karpenter will dutifully launch oversized instances. Real efficiency comes from pairing Karpenter’s provisioning logic with well-tuned application manifests.

Platforms like Plural help here by enforcing standardized resource configurations through GitOps. With consistent, version-controlled manifests, Karpenter always has reliable inputs for right-sizing decisions.

Achieve Multi-AZ Distribution Through Scheduling Rules

Karpenter does not distribute workloads across Availability Zones by itself. It simply acts on the scheduling constraints of pending pods. To achieve strong multi-AZ placement in production, define topologySpreadConstraints or explicit node affinity rules in your workloads. For example, spreading replicas across topology.kubernetes.io/zone ensures that Karpenter provisions nodes in each relevant zone as needed.
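The sketch below shows a zone spread constraint as it would appear in a pod spec, plus a small function that mimics how maxSkew narrows the zones a new replica may land in. The skew rule used here (pods in the target zone after placement, minus the lowest count across zones) is my reading of the Kubernetes behavior; the label and zone names are hypothetical.

```python
from collections import Counter

# Pod-spec fragment; the "app: web" label is a made-up example.
SPREAD_CONSTRAINT = {
    "maxSkew": 1,
    "topologyKey": "topology.kubernetes.io/zone",
    "whenUnsatisfiable": "DoNotSchedule",
    "labelSelector": {"matchLabels": {"app": "web"}},
}

ZONES = ["us-east-1a", "us-east-1b", "us-east-1c"]  # example zones


def allowed_zones(replica_zones, zones=ZONES, max_skew=1):
    """Zones where one more replica keeps the spread within max_skew."""
    counts = Counter(replica_zones)
    global_min = min(counts.get(z, 0) for z in zones)
    return [z for z in zones if counts.get(z, 0) + 1 - global_min <= max_skew]


if __name__ == "__main__":
    running = ["us-east-1a", "us-east-1a", "us-east-1b"]
    # Only the empty zone is allowed, so a new node would be needed there.
    print(allowed_zones(running))
```

When the only allowed zone has no node with free capacity, the pod becomes unschedulable with a zone requirement attached, which is the signal Karpenter acts on.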

Plural enables you to define these policies once and propagate them across all applications and clusters, ensuring consistent high-availability behavior.

Align Karpenter with Reserved Instances

Karpenter's on-demand, dynamic provisioning can unintentionally bypass Reserved Instances or Savings Plans. Because it selects from any instance type allowed by the NodePool, it may launch on-demand instances even when unused reserved capacity exists.

To address this, create dedicated NodePools targeting instance types covered by your RIs. Guide critical or stateful workloads to that pool using node selectors or affinities, while allowing burst workloads to run on separate Spot or on-demand pools. This setup maximizes RI utilization without undermining Karpenter’s flexibility.
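A dedicated pool for reserved capacity might be generated along the following lines. This is a sketch under assumptions: it uses the karpenter.sh/v1 NodePool fields as I understand them (weight, limits, requirements), caps the pool at the number of vCPUs your reservations cover, and labels nodes with a hypothetical capacity-pool label that workloads can target with a node selector.

```python
def reserved_pool(instance_type, vcpus_per_instance, reserved_count,
                  node_class="default"):
    """Build a NodePool limited to the instance type and size of a reservation."""
    return {
        "apiVersion": "karpenter.sh/v1",
        "kind": "NodePool",
        "metadata": {"name": "reserved-" + instance_type.replace(".", "-")},
        "spec": {
            # Higher weight asks Karpenter to consider this pool first.
            "weight": 100,
            # Stop provisioning once reserved capacity is used up.
            "limits": {"cpu": str(vcpus_per_instance * reserved_count)},
            "template": {
                # Hypothetical label for steering workloads to this pool.
                "metadata": {"labels": {"capacity-pool": "reserved"}},
                "spec": {
                    "nodeClassRef": {
                        "group": "karpenter.k8s.aws",
                        "kind": "EC2NodeClass",
                        "name": node_class,
                    },
                    "requirements": [
                        {"key": "node.kubernetes.io/instance-type",
                         "operator": "In", "values": [instance_type]},
                        {"key": "karpenter.sh/capacity-type",
                         "operator": "In", "values": ["on-demand"]},
                    ],
                },
            },
        },
    }


# Pod-spec fragment that pins a workload to the reserved pool.
RESERVED_NODE_SELECTOR = {"nodeSelector": {"capacity-pool": "reserved"}}

if __name__ == "__main__":
    pool = reserved_pool("m5.xlarge", vcpus_per_instance=4, reserved_count=10)
    print(pool["metadata"]["name"], pool["spec"]["limits"])
```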

Handle Spot Interruptions Gracefully

Spot Instances offer substantial cost savings, but the risk of interruption must be managed. Karpenter integrates with the EC2 interruption notice system, automatically cordoning and draining affected nodes before termination. It then provisions replacement capacity based on the pending pods’ requirements.

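To make this reliable, point the Karpenter controller at an interruption queue in its installation settings (on AWS, an SQS queue that receives EC2 interruption events), and make sure that queue exists in every cluster that runs Spot-capable NodePools. Plural’s centralized configuration model helps maintain consistent Spot policies across your entire fleet.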

By understanding these operational nuances and applying structured configuration practices, teams can deploy Karpenter at scale with confidence—achieving high availability, predictable costs, and strong workload resilience.

Monitor and Optimize Karpenter Performance

Installing Karpenter is only the first step. To keep scaling fast, predictable, and cost-efficient, you need ongoing visibility into how it provisions nodes and responds to scheduling pressure. Monitoring lets you verify that Karpenter’s decisions match your expectations, detect inefficiencies before they inflate cloud costs, and fine-tune NodePools and NodeClasses as workloads evolve.

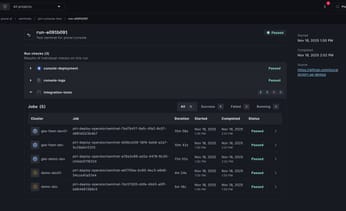

For teams running multiple clusters, a centralized view—such as Plural’s multi-cluster dashboard—simplifies validation and troubleshooting by consolidating Karpenter insights across your entire fleet.

Track Key Metrics and Indicators

Karpenter exposes detailed Prometheus metrics that reveal its provisioning behavior and lifecycle decisions. Metric names have changed between Karpenter releases, so confirm them against your controller’s metrics endpoint. A few essential metrics to monitor include:

- karpenter_nodes_created – how often new nodes are being launched

- karpenter_deprovisioning_actions_performed – frequency of consolidation or node removal

- karpenter_pods_startup_time_seconds – time from pod creation until the pod is running

These metrics establish a baseline for your cluster’s normal scaling patterns. Spikes in node creation, a sudden slowdown in pod startup times, or unexpected deprovisioning activity often signal misconfigurations, API bottlenecks, or capacity constraints.
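For a quick baseline without a full dashboard, the metrics text can be parsed directly. The sketch below reads a sample in the Prometheus text exposition format and totals the series named above. The sample values and label names are invented, and the parser is deliberately naive: it assumes no timestamps and no commas or spaces inside label values.

```python
SAMPLE = """\
# TYPE karpenter_nodes_created counter
karpenter_nodes_created{nodepool="general"} 12
karpenter_nodes_created{nodepool="gpu"} 3
karpenter_deprovisioning_actions_performed{action="consolidation"} 7
karpenter_pods_startup_time_seconds_sum 84.5
karpenter_pods_startup_time_seconds_count 10
"""


def parse_samples(text):
    """Parse 'name{labels} value' lines into (name, labels, value) tuples."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        series, value = line.rsplit(" ", 1)
        name, _, raw_labels = series.partition("{")
        labels = {}
        for pair in raw_labels.rstrip("}").split(","):
            if pair:
                key, _, val = pair.partition("=")
                labels[key] = val.strip('"')
        samples.append((name, labels, float(value)))
    return samples


def total(samples, metric):
    """Sum a metric across all of its label combinations."""
    return sum(value for name, _, value in samples if name == metric)


def mean_startup_seconds(samples, metric="karpenter_pods_startup_time_seconds"):
    """Average pod startup time from the _sum and _count series."""
    count = total(samples, metric + "_count")
    return total(samples, metric + "_sum") / count if count else None


if __name__ == "__main__":
    parsed = parse_samples(SAMPLE)
    print("nodes created:", total(parsed, "karpenter_nodes_created"))
    print("mean pod startup (s):", mean_startup_seconds(parsed))
```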

Monitor Provisioning and Scheduling Outcomes

Karpenter’s primary goal is to schedule pods quickly. If pods sit Pending for longer than expected, review Kubernetes events to understand why. Common causes include:

- Instance type shortages in a specific zone

- Incorrect NodePool constraints

- Restrictive affinity/anti-affinity rules

- IAM or subnet misconfigurations in the NodeClass

With Plural’s unified UI, you can observe pod states and events across all clusters without juggling multiple kubeconfigs, making it easier to identify patterns and diagnose configuration issues.

Analyze Resource Allocation and Utilization

Even with Karpenter’s right-sizing capabilities, ongoing utilization analysis is essential. Review CPU and memory usage on provisioned nodes to validate that workloads are being packed efficiently:

- Underutilized nodes may indicate inflated pod resource requests or overly broad NodePool constraints.

- Overloaded nodes suggest the need for adjusted requests, limits, or instance-family constraints.

Striking the right balance ensures your workloads run smoothly while maximizing cost efficiency.

Integrate Karpenter Into Your Observability Stack

Since Karpenter provides Prometheus-formatted metrics, integrating it into existing monitoring pipelines is straightforward. Scrape its metrics endpoint with Prometheus and use Grafana to create dashboards that visualize:

- Node provisioning patterns

- Pod startup performance

- Consolidation and deprovisioning activity

- Instance type distribution

- Zone-specific scaling behavior

Plural streamlines this by providing a ready-to-deploy observability stack with preconfigured dashboards for Karpenter and other critical components. This reduces the operational overhead of building observability from scratch and ensures teams have immediate, actionable insights into how autoscaling is performing.

By actively monitoring performance and refining configuration as workloads change, you ensure Karpenter delivers maximum speed, efficiency, and cost savings in production.

Apply Best Practices for Karpenter in Production

Running Karpenter in production requires configurations that prioritize stability, cost governance, and predictable scaling. While Karpenter automates node provisioning, its behavior is only as accurate as the configurations and workload definitions it receives. Production-ready setups depend on consistently applied policies—resource requests, NodePool constraints, lifecycle rules, and cost guardrails.

In multi-cluster environments, maintaining this consistency is often the hardest part. A GitOps-driven workflow with a platform like Plural ensures that Karpenter policies are declared once, versioned, and enforced everywhere, preventing drift and ensuring every cluster adheres to the same autoscaling, security, and cost-management standards.

Set Accurate Resource Requests and Limits

Karpenter’s provisioning logic relies entirely on pod-level resource requests. If workloads omit or inflate CPU and memory requests, the autoscaler will either overprovision costly instances or fail to meet actual application needs.

Best practices for production workloads include:

- Defining CPU and memory requests for every deployment

- Setting limits to prevent noisy-neighbor issues

- Enforcing resource policies using admission controllers or GitOps rules

Accurate requests enable Karpenter to right-size nodes and maximize bin packing. Teams that standardize resource definitions give it consistent inputs, which reduces overprovisioning and improves cost efficiency.
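A simple way to enforce the first practice is to audit pod specs for missing requests before they are merged. The function below is a hypothetical helper for a CI or review step; it only inspects the standard resources.requests field of each container and does not judge whether the values are sensible.

```python
def missing_requests(pod_spec):
    """Return (container name, resource) pairs that lack a request."""
    problems = []
    for container in pod_spec.get("containers", []):
        requests = container.get("resources", {}).get("requests", {})
        for resource in ("cpu", "memory"):
            if resource not in requests:
                problems.append((container["name"], resource))
    return problems


if __name__ == "__main__":
    # Example pod spec with one well-defined and one incomplete container.
    spec = {
        "containers": [
            {"name": "api",
             "resources": {"requests": {"cpu": "250m", "memory": "256Mi"},
                           "limits": {"memory": "512Mi"}}},
            {"name": "sidecar",
             "resources": {"requests": {"cpu": "50m"}}},
        ]
    }
    for name, resource in missing_requests(spec):
        print(f"container {name!r} has no {resource} request")
```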

Manage Node Lifecycle for Stability and Cost Savings

Node lifecycle settings let you keep clusters healthy and cost-optimized:

- Consolidation: Actively replaces inefficient nodes by repacking pods and terminating underutilized capacity.

- Expiration (expireAfter in the NodePool API, ttlSecondsUntilExpired in the older Provisioner API): Forces periodic node rotation, which helps apply security patches and mitigates issues like memory leaks or long-lived configuration drift.

These features ensure nodes remain fresh, workloads stay balanced, and your cluster continuously converges toward an optimized state.

Implement Cost Controls and Billing Guardrails

Even with right-sized nodes, dynamic autoscaling can lead to unexpected costs if workloads spike or constraints are misconfigured. Production environments should combine cloud-native billing protections with Karpenter-level limits:

- Configure billing alarms in your cloud provider to catch cost spikes early

- Use NodePool spec.limits to cap the total CPU or memory that each NodePool may provision

- Separate Spot and On-Demand NodePools to control how workloads consume different pricing models

These controls establish both soft alerts and hard ceilings, giving you predictable spend even under heavy load.
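The effect of a NodePool limit can be reasoned about with a few lines of arithmetic. The sketch below is a simplified model of a CPU cap, not Karpenter's enforcement code: it parses Kubernetes CPU quantities ("4" or "500m") and checks whether one more node would push a pool past its spec.limits.cpu value. The function names are hypothetical.

```python
def cpu_to_millicores(quantity):
    """Convert a Kubernetes CPU quantity such as '4' or '500m' to millicores."""
    quantity = str(quantity)
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def within_cpu_limit(node_pool, provisioned_cpus, new_node_cpu):
    """True if adding a node keeps the pool at or under spec.limits.cpu."""
    limit = node_pool.get("spec", {}).get("limits", {}).get("cpu")
    if limit is None:
        return True  # no cap configured
    used = sum(cpu_to_millicores(c) for c in provisioned_cpus)
    return used + cpu_to_millicores(new_node_cpu) <= cpu_to_millicores(limit)


if __name__ == "__main__":
    pool = {"spec": {"limits": {"cpu": "100"}}}   # example cap of 100 vCPU
    running = ["48", "48"]                        # two 48-vCPU nodes already up
    print(within_cpu_limit(pool, running, "4"))   # fits exactly at the cap
    print(within_cpu_limit(pool, running, "8"))   # would exceed the cap
```

Sizing the cap from your budget (maximum vCPUs you are willing to pay for) turns a billing alarm into a hard ceiling.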

Use Dedicated Controller Placement

The Karpenter controller must remain available for autoscaling to function. Running it on Karpenter-managed nodes risks a circular dependency where the controller terminates its own host node.

Production deployments should:

- Run the controller on a self-managed node group, or

- Use a serverless option like AWS Fargate

This separation prevents accidental deprovisioning and ensures that scaling operations continue uninterrupted during consolidations, terminations, or node churn.

By applying these best practices—and managing them centrally using a tool like Plural—you establish a scalable, reliable framework for running Karpenter in demanding production environments.

Use Advanced Karpenter Features for Optimization

Once Karpenter is reliably provisioning nodes, you can unlock its more advanced capabilities to refine cost efficiency, strengthen workload isolation, and improve resilience. These features let you shape how nodes are created, how workloads are placed, and how disruptions are handled during scaling or lifecycle events.

As clusters multiply, keeping these configurations aligned becomes complex. A GitOps-based platform like Plural helps maintain consistency by defining all advanced Karpenter policies—taints, interruption handling, PDB rules, and lifecycle settings—as code. This ensures every cluster uses the same hardened configuration and eliminates the risk of misaligned or outdated definitions.

Match Custom Resources With Taints

Taints allow you to reserve specific nodes for exclusive classes of workloads, making them ideal for specialized hardware such as GPU- or high-memory nodes. By assigning taints to a NodePool, you ensure that only pods with the corresponding tolerations can run on those nodes.

Karpenter takes these scheduling rules into account when provisioning new capacity. If a pending pod has a matching toleration, Karpenter creates a node with the required taints. This guarantees that specialized resources remain dedicated to the applications that truly need them and prevents accidental or wasteful usage by general-purpose workloads.
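The matching rule between pod tolerations and NodePool taints can be sketched as follows. This mirrors the standard Kubernetes toleration semantics as I understand them (Equal and Exists operators, an empty effect matching any effect); the taint key and value are example choices, and the function names are hypothetical.

```python
# Example taint for a GPU NodePool (set under spec.template.spec.taints).
GPU_TAINT = {"key": "nvidia.com/gpu", "value": "true", "effect": "NoSchedule"}


def tolerates(toleration, taint):
    """Return True if a single toleration matches a single taint."""
    effect = toleration.get("effect")
    if effect and effect != taint["effect"]:
        return False
    key = toleration.get("key")
    if toleration.get("operator", "Equal") == "Exists":
        # An empty key with Exists tolerates every taint.
        return not key or key == taint["key"]
    return key == taint["key"] and toleration.get("value") == taint.get("value")


def pod_fits_pool(pod_tolerations, pool_taints):
    """A pod fits a pool only if every taint is tolerated by some toleration."""
    return all(any(tolerates(t, taint) for t in pod_tolerations)
               for taint in pool_taints)


if __name__ == "__main__":
    ml_job = [{"key": "nvidia.com/gpu", "operator": "Exists",
               "effect": "NoSchedule"}]
    web_app = []  # no tolerations
    print(pod_fits_pool(ml_job, [GPU_TAINT]))   # True
    print(pod_fits_pool(web_app, [GPU_TAINT]))  # False
```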

Configure Interruption Handling

Spot Instances are one of the most effective ways to lower compute costs, and Karpenter’s native interruption handling makes them practical for more workloads. Karpenter integrates with AWS’s interruption notice queue and reacts as soon as a Spot Instance receives a two-minute termination warning. It:

- Taints the node to stop new pod scheduling

- Drains the running pods safely

- Provisions replacement capacity immediately

This automatic, graceful handoff minimizes disruption and lets you safely incorporate Spot capacity into production workloads that can tolerate brief interruptions. Using Plural ensures these configurations, especially the interruption queue and node class settings, stay consistent across all clusters.

Use Pod Disruption Budgets and Node Tagging

For voluntary disruptions like consolidation or node expiration, Pod Disruption Budgets (PDBs) are essential. A PDB limits how many replicas of an application may be down at once, expressed either as a minimum number that must stay available (minAvailable) or a maximum that may be unavailable (maxUnavailable). When Karpenter attempts to deprovision a node, it checks PDBs before draining. If removing the node would violate a PDB, Karpenter waits until enough replicas are running elsewhere.

Combined with node tagging, you can:

- Control how workloads move during scaling

- Enforce cost-allocation policies

- Ensure high availability during consolidations, upgrades, and rotations

This gives you granular control over both availability and organizational structure during scaling events.
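The PDB check described in this section can be approximated with a small model. The sketch below assumes each application has a minAvailable budget and asks whether evicting all of its pods on one node at once would drop it below that minimum. Real drains evict pods individually through the eviction API, so this is a conservative simplification, and the data shapes are hypothetical.

```python
from collections import Counter


def blocking_apps(apps_on_node, budgets):
    """Apps whose PDB would be violated by evicting their pods on this node.

    apps_on_node: one entry per pod on the node, naming the app it belongs to.
    budgets: app name -> (currently healthy replicas, minAvailable).
    Apps without a budget never block the drain.
    """
    blocked = []
    for app, evictions in Counter(apps_on_node).items():
        healthy, min_available = budgets.get(app, (evictions, 0))
        if healthy - evictions < min_available:
            blocked.append(app)
    return sorted(blocked)


if __name__ == "__main__":
    budgets = {
        "web": (3, 2),  # 3 healthy replicas, at least 2 must stay up
        "db": (2, 2),   # no room for any voluntary eviction
    }
    # A node running one web pod and one db pod cannot be drained yet.
    print(blocking_apps(["web", "db"], budgets))
```

An empty result means the node could be drained immediately; a non-empty one names the workloads that need more healthy replicas elsewhere first.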

Integrate With Existing Autoscaling Tools

While Karpenter can replace the Cluster Autoscaler entirely, some teams benefit from a hybrid strategy. A common pattern is:

- Use the Cluster Autoscaler for baseline capacity backed by Reserved Instances

- Use Karpenter for burstable, variable workloads using Spot or On-Demand nodes

The key is to isolate node management responsibilities. Distinct NodePools or node groups prevent the two autoscalers from managing the same resources and causing conflicts. This hybrid approach preserves predictable cost savings from RIs while giving you Karpenter’s speed and flexibility for dynamic workloads.

Using these advanced features—and managing them consistently with Plural—lets you build a resilient, cost-optimized, production-grade autoscaling environment tailored to your workloads.

Manage Karpenter at Scale with Plural

Karpenter makes autoscaling far more efficient at the cluster level, but managing it across dozens or hundreds of clusters introduces an entirely different set of challenges. Each cluster needs consistent NodePool definitions, secure NodeClass configurations, lifecycle policies, and monitoring. Without centralized governance, differences accumulate, leading to configuration drift, inconsistent scaling behavior, and gaps in security or cost controls.

Plural solves this by turning Karpenter into a fleet-level capability rather than a per-cluster component. Using a GitOps-driven model, Plural enforces autoscaling standards, keeps configurations aligned, and gives platform teams a single place to observe Karpenter’s performance across the entire organization.

Define Fleet-Wide Autoscaling Policies

Karpenter’s power comes from its declarative configurations—NodePools, NodeClasses, taints, labels, instance-type constraints, and lifecycle settings. Managing these by hand across many clusters is error-prone and hard to audit.

Plural centralizes these policies using Git:

- Define standardized Karpenter configurations once

- Apply them automatically to every cluster through Global Services

- Version changes, review them via pull requests, and roll them out predictably

This approach guarantees every cluster shares the same autoscaling posture. Any update—adding Spot instance families, adjusting constraints, or tuning lifecycle settings—is automatically deployed everywhere without manual sync work or drift.

Gain Centralized Monitoring and Control

Even when each cluster exposes Karpenter’s Prometheus metrics, aggregating them across a fleet is nontrivial. Without unified visibility, it’s hard to detect systemic issues such as:

- Inefficient provisioning patterns

- Low pod scheduling throughput

- Repeated node churn

- Cost anomalies

Plural addresses this by shipping a federated observability stack and a multi-cluster dashboard. It collects and visualizes key Karpenter indicators—node utilization, pod startup latency, provisioning frequency, interruption handling—across every cluster. Platform teams get a consolidated view of autoscaling behavior without switching kubeconfig contexts or managing bespoke metric pipelines.

Drive Configuration with GitOps

Fleet-scale reliability depends on treating configuration as code, and Plural enforces this approach end-to-end. Every change to a Karpenter NodePool, NodeClass, or lifecycle policy flows through Git:

- Updates are proposed via pull requests

- Merges become the canonical source of truth

- Plural’s operators roll out changes automatically to the designated clusters

This replaces ad-hoc kubectl changes with reviewed commits, makes each cluster's configuration reproducible from Git, and provides a full audit trail. When something goes wrong, you can review exactly what changed and when, which is critical for debugging autoscaling behavior at scale.

By managing Karpenter with Plural, organizations gain the consistency, visibility, and governance needed to run efficient, production-grade autoscaling across their entire Kubernetes fleet.

Frequently Asked Questions

Can I use Karpenter for my stateful applications, or is it only for stateless ones?

Karpenter is workload-agnostic, meaning it works just as well for stateful applications as it does for stateless ones. It makes provisioning decisions based on the scheduling requirements of any pending pod. For stateful workloads, this includes respecting constraints like PersistentVolume topology, anti-affinity rules to spread pods across nodes, and specific node selectors. As long as your StatefulSet pods are configured with the correct scheduling directives, Karpenter will provision nodes that meet those exact requirements, ensuring your stateful applications run correctly.

If Karpenter is so efficient, why are my cloud costs still high?

Karpenter's efficiency is directly tied to the information it receives from your pods. It sizes nodes from the resource requests declared in your manifests, not from what the containers actually consume, so the most common reason for high costs is inaccurate or missing resource requests. If a pod requests far more CPU or memory than it needs, Karpenter will provision a larger, more expensive node to match that request, leading to waste. True cost optimization requires both Karpenter's intelligent provisioning and well-defined resource requests from your applications.
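For reference, a minimal sketch of a pod with explicit requests might look like the following. The pod name, image, and the specific CPU and memory figures are placeholders; real values should come from observed usage of your own workload.

```yaml
# Illustrative pod; name, image, and resource figures are placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: api-example
spec:
  containers:
    - name: api
      image: registry.example.com/api:1.0.0
      resources:
        # Requests are what the scheduler and Karpenter use for sizing.
        requests:
          cpu: 250m
          memory: 512Mi
        # Limits cap what the container may consume at runtime.
        limits:
          memory: 512Mi
```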

How does Karpenter handle high availability across different availability zones?

Karpenter does not distribute workloads across availability zones on its own. Instead, it respects the standard Kubernetes scheduling constraints you define for your applications. To achieve high availability, configure your deployments with topologySpreadConstraints that instruct the scheduler to spread pods across zones. When Karpenter sees pending pods with these constraints, it provisions new nodes in the required zones to satisfy them, which keeps your application resilient to the loss of a single zone.
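A zone-spreading constraint of this kind can be sketched as follows. The Deployment name, labels, image, and replica count are illustrative assumptions; the `topologySpreadConstraints` fields and the `topology.kubernetes.io/zone` key are standard Kubernetes.

```yaml
# Illustrative Deployment; name, labels, image, and replica count are placeholders.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 3
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      topologySpreadConstraints:
        # Keep the per-zone pod count within 1 of each other.
        - maxSkew: 1
          topologyKey: topology.kubernetes.io/zone
          # Leave pods Pending rather than violate the spread.
          whenUnsatisfiable: DoNotSchedule
          labelSelector:
            matchLabels:
              app: web
      containers:
        - name: web
          image: registry.example.com/web:1.0.0
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
```

With `whenUnsatisfiable: DoNotSchedule`, a pod that cannot be placed without breaking the spread stays pending, and that pending pod is what prompts Karpenter to add capacity in the under-represented zone.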

What's the most common reason pods get stuck in a "Pending" state with Karpenter?

While there can be several causes, a frequent issue is a mismatch between a pod's scheduling requirements and the constraints defined in your Karpenter NodePool. For example, your pod might request a GPU instance, but your NodePool may not be configured to allow provisioning of GPU instance types. Another common cause is requesting an instance type that is simply unavailable in your specified region or availability zone. Reviewing Karpenter's logs and Kubernetes events will typically point you to the specific constraint that could not be satisfied.
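To make the GPU case concrete, the sketch below pairs a pod that requests a GPU with a NodePool that permits GPU instance families. It assumes the AWS provider, an existing EC2NodeClass named `default`, and a cluster where the NVIDIA device plugin exposes the `nvidia.com/gpu` resource; all names and the image are placeholders.

```yaml
# Pod side: requests one GPU via the nvidia.com/gpu extended resource.
apiVersion: v1
kind: Pod
metadata:
  name: gpu-job                      # hypothetical name
spec:
  containers:
    - name: trainer
      image: registry.example.com/trainer:1.0.0
      resources:
        limits:
          nvidia.com/gpu: 1
---
# NodePool side: must allow instance categories that actually carry GPUs.
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: gpu                          # hypothetical name
spec:
  template:
    spec:
      nodeClassRef:
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default                # assumes this EC2NodeClass exists
      requirements:
        - key: karpenter.k8s.aws/instance-category
          operator: In
          values: ["g", "p"]         # GPU-oriented EC2 families
```

If the only NodePool in the cluster restricted this requirement to general-purpose categories such as `["c", "m", "r"]`, no instance type it is allowed to launch could satisfy the GPU request, and the pod would remain pending.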

How can I apply a consistent Karpenter configuration to all my clusters without manual work?

Keeping configurations like NodePools and EC2NodeClasses consistent across a fleet of clusters is difficult to do reliably by hand. The best approach is to use a GitOps workflow. With a platform like Plural, you can define your Karpenter configurations as code in a central Git repository. Plural's continuous deployment engine then automatically applies these configurations to all your target clusters, so every cluster runs the same version-controlled setup. This reduces configuration drift and provides a clear audit trail for every change.
