Self-Hosted LLM: A Practical Guide for DevOps

Learn the essentials of self-hosted LLMs, including benefits, key considerations, and tools to effectively manage your own large language models.

In today's data-driven world, large language models (LLMs) have become essential tools for businesses seeking to innovate and improve efficiency. But with the increasing use of LLMs comes the growing need for robust, secure, and cost-effective hosting solutions. Self-hosted LLMs offer a compelling alternative to cloud-based providers, giving organizations greater control over their data and infrastructure. This post provides a comprehensive guide to self-hosted LLMs, exploring the key benefits, addressing the challenges, and offering practical steps for successful implementation. We'll examine popular self-hosted LLM solutions like OpenLLM, Ray Serve, and Hugging Face TGI, and showcase how platforms like Plural simplify the deployment and management of these complex systems on Kubernetes. Whether you're prioritizing data privacy, seeking cost optimization, or aiming for maximum customization, this guide will equip you with the knowledge to make informed decisions about self-hosted LLMs.

Since its arrival in November 2022, ChatGPT has changed the way many of us work by using generative artificial intelligence (AI) to streamline tasks, produce content, and provide quick recommendations. By building on this technology, companies and individuals can improve efficiency while reducing the amount of manual work involved.

At the core of ChatGPT and other AI algorithms lie Large Language Models (LLMs), renowned for their remarkable capacity to generate human-like written content. One prominent application of LLMs is in the realm of website chatbots utilized by companies.

By feeding customer and product data into LLMs and continually refining the training, these chatbots can deliver instantaneous responses, personalized recommendations, and unfettered access to information. Furthermore, their round-the-clock availability empowers websites to provide continuous customer support and engagement, unencumbered by constraints of staff availability.

While LLMs are undeniably beneficial for organizations, enabling them to operate more efficiently, there is also a significant concern regarding the utilization of cloud-based services like OpenAI and ChatGPT for LLMs. With sensitive data being entrusted to these cloud-based platforms, companies can potentially lose control over their data security.

Simply put, they relinquish ownership of their data. In these privacy-conscious times, companies in regulated industries are expected to adhere to the highest standards when it comes to handling customer data and other sensitive information.

In heavily regulated industries like healthcare and finance, companies need to have the ability to self-host some open-source LLM models to regain control of their own privacy. Here is what you need to know about self-hosting LLMs and how you can easily do so with Plural.

Should You Self-Host Your LLM?

In the past year, the discussion surrounding LLMs has evolved, transitioning from "Should we utilize LLMs?" to "Should we opt for a self-hosted solution or rely on a proprietary off-the-shelf alternative?"

Like many engineering questions, the answer to this one is not straightforward. While we are strong proponents of self-hosting infrastructure – we even self-host our AI chatbot for compliance reasons – we also rely on our Plural platform, leveraging the expertise of our team, to ensure our solution is top-notch.

We often urge our customers to answer the questions below before self-hosting LLMs.

- Where would you want to host LLMs?

- Do you have a client-server architecture in mind? Or, something with edge devices, such as on your phone?

It also depends on your use case:

- What will the LLMs be used for in your organization?

- Do you work in a regulated industry and need to own your proprietary data?

- Does it need to be in your product in a short period?

- Do you have engineering resources and expertise available to build a solution from scratch?

If you require compliance as a crucial feature for your LLM and have the necessary engineering expertise to self-host, you'll find an abundance of tools and frameworks available. By combining these various components, you can build your solution from the ground up, tailored to your specific needs.

If your aim is to quickly implement an off-the-shelf model for a RAG-LLM application, which only requires proprietary context, consider using a solution at a higher abstraction level such as OpenLLM, TGI, or vLLM.

Key Takeaways

- Prioritize data privacy and security with self-hosted LLMs. Keeping sensitive data within your own infrastructure offers greater control and compliance, especially critical for regulated industries.

- Control costs with self-hosted LLMs. While demanding initial investment and expertise, self-hosting eliminates recurring subscription fees, providing predictable long-term cost management for high-usage applications.

- Simplify self-hosting LLMs on Kubernetes with Plural. Leverage pre-built deployments for tools like Ray and Yatai, freeing your team to focus on application development rather than infrastructure management.

Key Considerations for Self-Hosting

Data Privacy and Security

Using cloud-based LLMs like ChatGPT often means companies lose control over their data. This is a major concern for businesses in regulated industries (healthcare, finance, etc.) that must protect sensitive customer information. Self-hosting LLMs offers greater security, privacy, and compliance. You maintain control of your data, ensuring it doesn't leave your infrastructure. This is paramount for adhering to industry regulations and maintaining customer trust. As we discussed in our previous post on self-hosting LLMs, data privacy is a primary driver for many organizations choosing this approach.

Cost Analysis

Cloud-based LLM providers offer convenience, but the costs can quickly escalate with high usage. Self-hosting lets you avoid recurring subscription fees and invest in infrastructure you own. This offers predictable, long-term cost management, especially beneficial for applications with consistent, heavy LLM usage. HatchWorks AI highlights how self-hosting offers better control over data, improves compliance, allows for easier customization and experimentation, and ultimately can lead to significant cost savings compared to cloud-based alternatives, especially for high-usage scenarios.
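To make the cost trade-off concrete, here is a minimal break-even sketch. The prices are hypothetical placeholders, not quotes from any provider, and the model ignores engineering time, so treat it as a starting point for your own numbers rather than a real estimate.

```python
def monthly_api_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Usage-based cost: you pay for every token processed."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume above which a fixed-cost deployment is cheaper."""
    return fixed_monthly_cost / price_per_million_tokens * 1_000_000


# Hypothetical figures, chosen only for illustration.
API_PRICE_PER_MILLION = 2.00      # USD per million tokens on a managed API
SELF_HOSTED_MONTHLY = 3_000.00    # USD per month for GPUs, storage, and operations

threshold = break_even_tokens(SELF_HOSTED_MONTHLY, API_PRICE_PER_MILLION)
print(f"Break-even at about {threshold:,.0f} tokens per month")
```

Below the threshold the managed API is cheaper; above it, the fixed-cost infrastructure starts to pay off.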

Infrastructure and Expertise

Self-hosting LLMs offers more control and customization than using managed APIs, but it also introduces more complexity. Zilliz's guide explains that the decision to self-host depends on balancing the need for control, customization, and long-term cost savings against the ease and speed of managed APIs. Before deciding to self-host, evaluate your existing IT infrastructure and the availability of skilled engineers to manage the complexities of a self-hosted LLM environment. This includes considering your current client-server architecture.

Why Self-Host an LLM?

Although there are various advantages to self-hosting LLMs, three key benefits stand out prominently.

1. Greater security, privacy, and compliance: This is ultimately the main reason companies opt to self-host LLMs. OpenAI’s Terms of Use even state that “We may use Content from Services other than our API (“Non-API Content”) to help develop and improve our Services.”

Anything you or your employees upload into ChatGPT may therefore be used as future training data. And, despite attempts to anonymize the data, it ultimately contributes to the model's knowledge. Unsurprisingly, there is even a conversation happening in the space as to whether or not ChatGPT's use of data is legal, but that’s a topic for a different day. What we do know is that many privacy-conscious companies have already begun to prohibit employees from using ChatGPT.

2. Customization: By self-hosting LLMs, you can scale alongside your use case. Organizations that rely heavily on LLMs might reach a point where it becomes economical to self-host. A telltale sign is when you begin to hit rate limits on public API endpoints and the performance of your application suffers as a result. Ideally, you could build it all yourself: train a model and create a model server for your chosen ML framework or model runtime (e.g., TensorFlow, PyTorch, or JAX). Most likely, though, you would leverage a distributed ML framework like Ray.

3. Avoid Vendor Lock-In: When choosing between open-source and proprietary solutions, a crucial question to address is your comfort with cloud vendor lock-in. Major cloud providers offer their own managed ML services, allowing you to host an LLM model server. However, migrating between them can be challenging, and depending on your specific use case, it may result in higher long-term expenses compared to open-source alternatives.

Popular Self-Hosted LLM Solutions

OpenLLM with Yatai

OpenLLM is specifically tailored for AI application developers who are tirelessly building production-ready applications using LLMs. It brings forth an extensive array of tools and functionalities to seamlessly fine-tune, serve, deploy, and monitor these models, streamlining the end-to-end deployment workflow for LLMs.

Key OpenLLM Features

- Serve LLMs over a RESTful API or gRPC with a single command. You can interact with the model using a Web UI, CLI, Python/JavaScript client, or any HTTP client of your choice.

- First-class support for LangChain, BentoML, and Hugging Face Agents

- E.g., tie a remote self-hosted OpenLLM server into your LangChain app

- Token streaming support

- Embedding endpoint support

- Quantization support

- You can fuse existing, model-compatible pre-trained QLoRA/LoRA adapters with the chosen LLM by adding a flag to the serve command, although this is still experimental:

https://github.com/bentoml/OpenLLM#️-fine-tuning-support-experimental

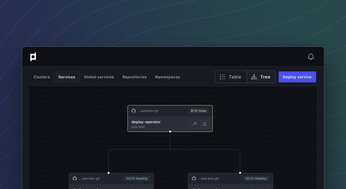

Self-Hosting Yatai on Plural

If you check out the official OpenLLM GitHub repo, you’ll see that the integration with BentoML makes it easy to run multiple LLMs in parallel across multiple GPUs or nodes, or to chain LLMs with other types of AI/ML models, and deploy the entire pipeline on BentoCloud (https://l.bentoml.com/bento-cloud). However, you can achieve the same on a Plural-deployed Kubernetes cluster via Yatai, which is essentially an open-source BentoCloud and should come at a much lower price point.

Ray Serve on a Ray Cluster

Ray Serve is a scalable model-serving library for building online inference APIs. Serve is framework-agnostic, so you can use a single toolkit to serve everything from deep learning models built with frameworks like PyTorch, TensorFlow, and Keras, to Scikit-Learn models, to arbitrary Python business logic. It has several features and performance optimizations for serving Large Language Models such as response streaming, dynamic request batching, multi-node/multi-GPU serving, etc.

Key Ray Serve Features

- It’s a huge, well-documented ML platform. In our opinion, it is the best-documented platform, with loads of examples to work from. However, you need to know what you’re doing when working with it, and it takes some time to get used to.

- Not focused on LLMs, but there are many examples of how to serve open-source (OS) LLMs from Hugging Face.

- Integrates nicely with Prometheus for cluster metrics and comes with a useful dashboard for monitoring your serving workloads as well as anything else running on your Ray cluster, such as data processing or model training.

- OpenAI reportedly uses it to train and host its models, so it’s fair to say it is among the most robust options for production use cases.

Self-Hosting Ray on Plural

Plural offers a fully functional Ray cluster on a Plural-deployed Kubernetes cluster, where you can do anything you can do with Ray, from data-parallel processing and distributed model training to serving off-the-shelf OS LLMs.

Hugging Face TGI

A Rust, Python, and gRPC server for text generation inference. Used in production at Hugging Face to power HuggingChat, the Inference API, and Inference Endpoints.

Key Hugging Face TGI Features

- Everything you need is containerized, so if you just want to run off-the-shelf HF models, this is probably one of the quickest ways to do it.

- They have no intent at the time of this writing to provide official Kubernetes support, citing

Self-Hosting Hugging Face LLMs on Plural

When you run an HF LLM model inference server via Text Generation Inference (TGI) on a Plural-deployed Kubernetes cluster you benefit from all the goodness of our built-in telemetry, monitoring, and integration with other marketplace apps to orchestrate it and host your data and vector stores. Here is a great example we recommend following along for deploying TGI on Kubernetes.

Building an LLM stack to self-host

When building an LLM stack, the first hurdle you'll encounter is finding the ideal stack that caters to your specific requirements. Given the multitude of available options, the decision-making process can be overwhelming. Once you've narrowed down your choices, creating and deploying a small application on a local host becomes a relatively straightforward task.

However, scaling said application presents an entirely separate challenge, which requires a certain level of expertise and time. For that, you’ll want to leverage some of the OS cloud-native platforms/tools we outlined above. It might make sense to use Ray in some cases as it gives you an end-to-end platform to process data, train, tune, and serve your ML applications beyond LLMs.

OpenLLM is geared more towards simplicity and operates at a higher abstraction level than Ray. If your end goal is to host a RAG LLM app using LangChain and/or LlamaIndex, OpenLLM in conjunction with Yatai can probably get you there quickest. Keep in mind that if you go that route, you’ll likely give up some of the flexibility that Ray offers.

For a typical RAG LLM app, you want to set up a data stack alongside the model serving component where you orchestrate periodic or event-driven updates to your data as well as all the related data-mangling, creating embeddings, fine-tuning the models, etc.

The Plural marketplace offers various data stack apps that can perfectly suit your needs. Additionally, our marketplace provides document-store/retrieval optimized databases, such as Elastic or Weaviate, which can be used as vector databases. Furthermore, during operations, monitoring and telemetry play a crucial role. For instance, a Grafana dashboard for your self-hosted LLM app could prove to be immensely valuable.

If you choose to go a different route, you can use a proprietary managed service or SaaS solution, which doesn’t come without overhead either, as it requires additional domain-specific knowledge. With self-hosting, the main overhead is operating and maintaining those platforms on Kubernetes.

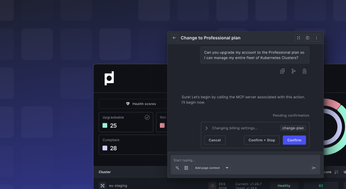

Plural to self-host LLMs

If you were to choose a solution like Plural you can focus on building your applications and not worry about the day-2 operations that come with maintaining those applications. If you are still debating between ML tooling, it could be beneficial to spin up an example architecture using Plural.

Our platform can bridge the gap between the “localhost” and “hello-world” examples in these frameworks to scalable production-ready apps because you don’t lose time on figuring out how to self-host model-hosting platforms like Ray and Yatai.

Plural is a solution that aims to provide a balance between self-hosting infrastructure applications within your own cloud account, seamless upgrades, and scaling.

To learn more about how Plural works and how we are helping organizations deploy secure and scalable machine learning infrastructure on Kubernetes, reach out to our team to schedule a demo.

If you would like to test out Plural, sign up for a free open-source account and get started today.

Hardware and Software Requirements for Self-Hosting LLMs

RAM and Storage

The RAM requirements for self-hosting an LLM depend heavily on the model's size. Smaller models, around 7 billion parameters, might run on a system with 16GB of RAM, but performance will be slow. Larger models (e.g., 40 billion parameters) often demand significantly more RAM, exceeding the capabilities of typical consumer hardware.
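As a rough back-of-the-envelope check, the memory needed for the weights alone is the parameter count multiplied by the bytes per parameter. The sketch below assumes common precisions (the byte sizes are standard, but the helper name is ours) and deliberately ignores activations, the KV cache, and runtime overhead, so real usage will be higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Memory for the model weights only, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


for label, params in [("7B", 7e9), ("40B", 40e9)]:
    for precision in ("fp16", "int8", "int4"):
        size = weight_memory_gb(params, precision)
        print(f"{label} @ {precision}: {size:.1f} GB for weights")
```

This is why a 7B model at 16-bit precision is a tight fit on a 16GB machine, while a 40B model at the same precision is well beyond typical consumer hardware.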

CPU and GPU

For optimal performance, use a powerful computer with a robust GPU. The parallel processing power of a good graphics card significantly benefits the demanding computations involved in running LLMs, leading to faster inference and training.

Software Dependencies

Several open-source tools simplify self-hosting LLMs. OpenLLM, Yatai, Ray Serve, and Hugging Face's TGI offer various features and complexities. The best tool for you depends on your specific needs and technical skills.

Choosing the Right LLM for Self-Hosting

Model Size and Performance

The number following an LLM model name (e.g., 7B, 13B, 40B) indicates the number of parameters. Higher numbers mean larger models, requiring more RAM and processing power. Choose a model that balances size with your hardware capabilities and desired performance.

Quantization for Resource Efficiency

Quantization reduces the size and RAM requirements of LLMs, making them viable on less powerful hardware. However, quantization can also decrease accuracy. Balance resource efficiency with performance when considering this technique.
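To illustrate the trade-off, here is a minimal sketch of symmetric 8-bit quantization on a handful of made-up weights. Production libraries use more sophisticated schemes (per-channel or per-group scales, 4-bit formats), so this only shows the basic idea: fewer bits per value, at the cost of a small rounding error.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


# Made-up example weights.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("max rounding error:", max_error)
```

Each value now fits in one byte instead of two or four, and the rounding error is bounded by half the scale.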

Specific Models: Mistral, LLaMA 2, Dolphin, Serge, LocalAI

Many open-source LLMs are available for self-hosting. Community feedback indicates that models like Dolphin, while performant, are resource-intensive. LLaMA 2, for instance, has been reported as slow even on a system with 64GB RAM and a GTX 1050 Ti, a GPU with only 4GB of VRAM, which means most of the model has to run from system memory. Research individual model performance on similar hardware before choosing.

Optimizing Your Self-Hosted LLM

Batching and Token Streaming

Batching requests (processing multiple requests at once) can dramatically improve throughput. Token streaming (sending answers incrementally) leads to faster responses and a better user experience.
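Both ideas can be sketched with plain Python generators. The model here is a hypothetical stand-in that simply echoes the prompt; serving frameworks typically batch dynamically as requests arrive, whereas this sketch uses fixed-size batches to keep the idea visible.

```python
from typing import Iterator, List


def batched(requests: List[str], batch_size: int) -> Iterator[List[str]]:
    """Group incoming requests so the model can process several at once."""
    for start in range(0, len(requests), batch_size):
        yield requests[start:start + batch_size]


def fake_generate(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one token at a time."""
    for token in f"echo: {prompt}".split():
        yield token


prompts = ["hello world", "how are you", "tell me a joke", "bye", "thanks"]
batches = list(batched(prompts, batch_size=2))
print(batches)

# Streaming: the caller can display each token as soon as it is produced.
streamed = []
for token in fake_generate("hello world"):
    streamed.append(token)
    print(token, end=" ", flush=True)
print()
```

Batching raises throughput because the hardware handles several requests per forward pass, while streaming improves perceived latency because the user sees the first tokens before the full answer is ready.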

Kernel Optimization and Model Parallelism

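For larger models, kernel optimization and model parallelism are crucial. Kernel optimization speeds up the low-level operations that run on the GPU, while model parallelism splits the model across multiple GPUs or nodes so that no single device has to hold or compute all of it.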
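One common form of model parallelism splits a layer's weight matrix by columns so each device computes part of the output. The toy sketch below simulates two devices with plain lists; the helper names are ours, and a real setup would run each shard on its own GPU and gather the results over the network.

```python
def matvec(x, weight_columns):
    """Multiply row vector x by a matrix stored as a list of columns."""
    return [sum(xi * wi for xi, wi in zip(x, col)) for col in weight_columns]


def split_columns(weight_columns, num_devices):
    """Assign contiguous column shards to each simulated device."""
    shard = -(-len(weight_columns) // num_devices)  # ceiling division
    return [weight_columns[i:i + shard] for i in range(0, len(weight_columns), shard)]


x = [1.0, 2.0, 3.0]
columns = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]]

# Reference result computed on a single device.
full = matvec(x, columns)

# Each simulated device holds only its shard and computes part of the output.
shards = split_columns(columns, num_devices=2)
partials = [matvec(x, shard) for shard in shards]
combined = [value for part in partials for value in part]

print(full, combined)
```

The combined output matches the single-device result, but each device only needs memory for its own shard of the weights.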

Caching and Pre-heating

Caching frequently used data and pre-heating the model reduces latency and improves response times, particularly for common queries.
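A minimal sketch of response caching, using Python's built-in `functools.lru_cache` and a hypothetical `slow_model` function in place of real inference. Here "pre-heating" is interpreted as filling the cache with common queries at startup. Exact-match caching like this only helps when identical prompts recur and responses are deterministic.

```python
from functools import lru_cache

CALLS = {"count": 0}  # tracks how often the expensive path actually runs


def slow_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive inference call."""
    CALLS["count"] += 1
    return prompt.upper()


@lru_cache(maxsize=1024)
def cached_answer(prompt: str) -> str:
    """Return a cached response when the exact prompt was seen before."""
    return slow_model(prompt)


def preheat(common_prompts):
    """Fill the cache at startup so the first real user gets a fast answer."""
    for prompt in common_prompts:
        cached_answer(prompt)


preheat(["what are your opening hours?"])
first = cached_answer("what are your opening hours?")  # served from the cache
print(first, "| model calls:", CALLS["count"])
```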

Container Image Optimization

Optimizing container images speeds up deployments and reduces resource usage, making the entire process more efficient.

Streaming Model Weights

Streaming model weights is a valuable technique for managing memory, especially with large models, preventing memory bottlenecks.

Step-by-Step Guide to Local LLM Deployment

Setting up the Environment

First, set up your environment. This includes getting a powerful computer with a good GPU to handle the LLM's processing needs.

Building a Knowledge Base with RAG

Create a knowledge base of documents for your LLM to use. This is essential for effective deployment and relevant responses.

Embedding Documents

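Embed the documents and store the resulting vectors in a vector database for efficient retrieval. At query time, embed the user's question the same way and search for the most similar document vectors. This transforms text into numerical vectors for similarity searches.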
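The retrieval flow can be sketched without any external services. The example below uses word counts as a toy embedding and an in-memory list as the "vector database"; both are simplifying assumptions, and a real deployment would swap in an embedding model and a store such as Weaviate or Elastic.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Made-up knowledge base; the list of (text, vector) pairs plays the role of the vector store.
documents = [
    "ray serve scales model serving on kubernetes",
    "quantization reduces model memory requirements",
    "grafana dashboards show cluster metrics",
]
index = [(doc, embed(doc)) for doc in documents]


def search(question: str, top_k: int = 1):
    """Embed the question and return the most similar documents."""
    query_vector = embed(question)
    ranked = sorted(index, key=lambda item: cosine(query_vector, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


print(search("how does quantization reduce memory"))
```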

Choosing and Importing a Pre-trained Model

Select and import a pre-trained LLM that meets your needs. This provides a foundation and reduces training time.

Running the Model and Getting Results

After importing, run the model and get results. This involves interacting with the LLM and receiving outputs based on its training and the context you provide.
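In a RAG setup, "the context you provide" usually means retrieved passages placed in the prompt ahead of the question. Here is a minimal sketch of that step; the prompt wording is just one possible template, and `run_model` is a hypothetical placeholder for whatever client your serving tool exposes.

```python
def build_prompt(question: str, context_chunks: list) -> str:
    """Combine retrieved passages and the user question into a single prompt."""
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )


def run_model(prompt: str) -> str:
    """Hypothetical placeholder for a call to your locally hosted model."""
    return f"[model output for a {len(prompt)}-character prompt]"


prompt = build_prompt(
    "What does quantization do?",
    ["Quantization reduces model memory requirements."],
)
print(prompt)
print(run_model(prompt))
```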

Helpful Resources and Communities

Online Communities (r/LocalLLaMA)

Online communities like r/LocalLLaMA offer valuable support and insights from others self-hosting LLMs. Sharing experiences and troubleshooting tips can be invaluable.

Tools (LM Studio)

LM Studio is a helpful tool for developing and testing LLM applications, providing a streamlined environment for experimentation.

Informative Content (YouTube Channels)

YouTube channels focused on AI and machine learning offer tutorials and insights into LLMs, including self-hosting, often with practical demonstrations.

Bootstrapping and Cross-Compilation for Self-Hosting

The "Chicken and Egg" Problem

Self-hosting often presents the bootstrapping problem: needing a program to build the program itself. This requires careful planning for a successful setup.

Using Cross-Compilers

Cross-compilers help build applications for different architectures, simplifying self-hosting by allowing development on one system while targeting another.

Building an LLM stack to self-host

Building an LLM stack begins with choosing the right tools. The many options can be overwhelming. Deploying a small test application locally is relatively straightforward, but scaling for production requires expertise and time. Ray offers a comprehensive platform for data processing, training, tuning, and serving ML applications, including LLMs.

OpenLLM, with Yatai, provides a simpler, higher-level abstraction than Ray, suitable for RAG LLM apps using LangChain or LlamaIndex. This simplicity may reduce flexibility compared to Ray.

A typical RAG LLM app needs a data stack alongside the model serving component. This stack manages data updates, processing, embeddings, and model fine-tuning. The Plural marketplace offers various data stack applications and vector databases like Elasticsearch or Weaviate. Monitoring and telemetry are crucial; a Grafana dashboard for your LLM app can be very helpful.

Proprietary managed services or SaaS solutions are alternatives, but they often require additional domain-specific knowledge. The main overhead of self-hosting is operating and maintaining these platforms on Kubernetes.

Plural to self-host LLMs

With Plural, you can focus on building applications, not managing daily operations. Plural simplifies scaling from local tests to production-ready deployments, handling the complexities of self-hosting platforms like Ray and Yatai on Kubernetes. If you're evaluating ML tooling, try prototyping your architecture with Plural. Schedule a demo to learn more, or sign up for a free open-source account to get started.

Frequently Asked Questions

Why should I consider self-hosting an LLM instead of using cloud-based services? Self-hosting offers greater control over your data, ensuring privacy and compliance, especially crucial for regulated industries. It can also be more cost-effective in the long run for high-usage applications, eliminating recurring subscription fees. Finally, self-hosting allows for deeper customization and avoids vendor lock-in.

What are some popular open-source tools for self-hosting LLMs, and what are their key features? OpenLLM with Yatai is a good option for deploying and managing LLMs, offering features like serving models via API, LangChain integration, and quantization support. Ray Serve on a Ray cluster provides a scalable model-serving library, framework-agnostic compatibility, and robust monitoring capabilities. Hugging Face TGI is a streamlined solution for running pre-trained Hugging Face models.

What are the hardware and software requirements for self-hosting an LLM? The requirements depend on the size and complexity of the LLM you choose. Larger models demand significant RAM (often exceeding consumer hardware capabilities) and a powerful GPU for optimal performance. Ensure you have sufficient storage and the necessary software dependencies, which can vary depending on the chosen LLM and supporting tools.

How can I optimize the performance of my self-hosted LLM? Several techniques can enhance performance. Batching requests improves throughput, while token streaming provides faster responses. For larger models, kernel optimization and model parallelism are essential. Caching frequently used data, pre-heating the model, optimizing container images, and streaming model weights can further improve efficiency and reduce resource usage.

What is Plural, and how can it help with self-hosting LLMs? Plural simplifies the deployment and management of complex applications on Kubernetes, including self-hosted LLM platforms like Ray and Yatai. It streamlines operations, allowing you to focus on building your LLM applications rather than infrastructure management. Plural also offers a marketplace of data stack applications and vector databases that can integrate seamlessly with your LLM deployment.
