kubectl get events: A Guide to K8s Troubleshooting

Learn how to use `kubectl get events` for Kubernetes troubleshooting. Get practical tips on filtering, analysis, and managing events across clusters.

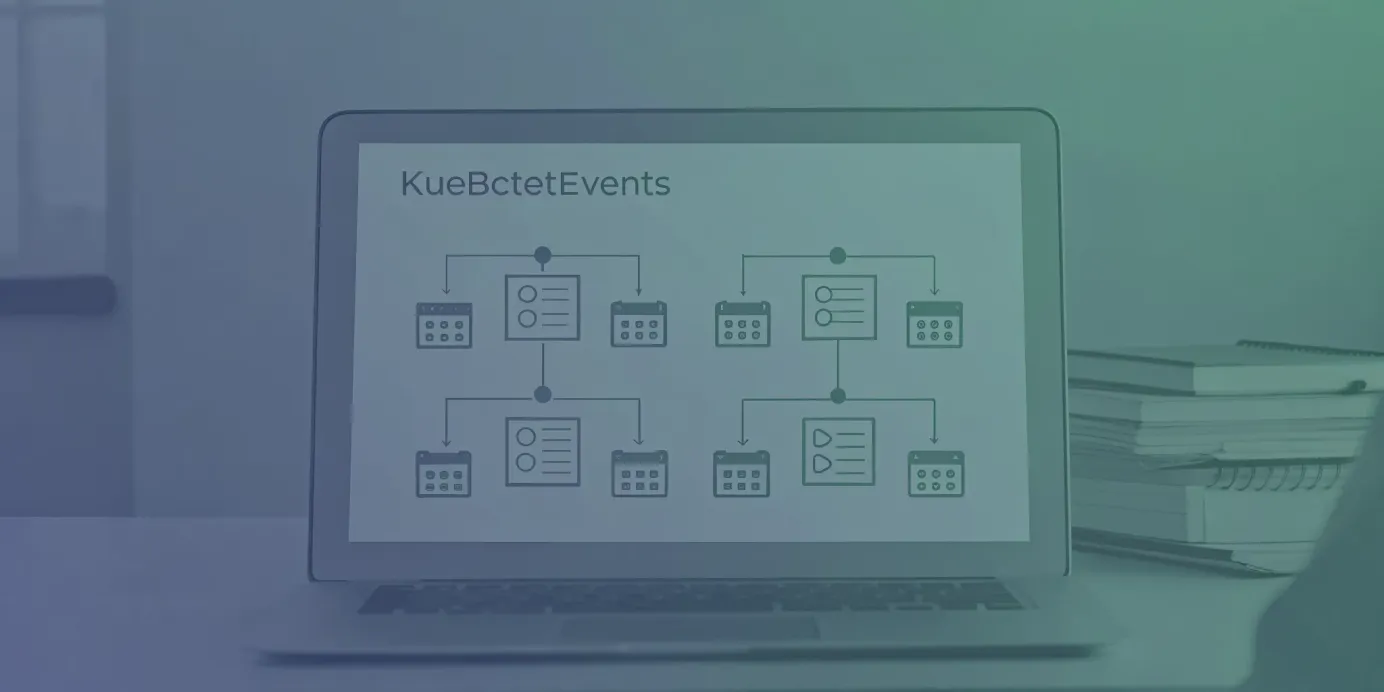

Kubernetes emits a continuous stream of events that describe control plane decisions and object lifecycle transitions. Compared to metrics (time-series aggregates) and logs (unstructured output), events are structured, low-latency signals tied to specific resources, covering scheduling, image pulls, restarts, probe failures, and node conditions. They are invaluable for reconstructing causality during incidents, but they are high-volume and short-lived (typically retained for ~1 hour by the API server).

The primary interface is kubectl get events, which surfaces these signals directly from the API. In practice, raw output is too noisy to be useful under pressure. Effective usage relies on field selectors, sorting, and scoping (namespace, involved object, reason/type) to isolate relevant signals quickly. This guide focuses on precise filtering techniques to debug issues in real time, then outlines patterns for persisting events so they become part of a durable observability pipeline. With the right approach, you can convert transient Kubernetes events into a reliable diagnostic layer alongside logs and metrics, and integrate them into a broader platform workflow with tools like Plural.

Unified Cloud Orchestration for Kubernetes

Manage Kubernetes at scale through a single, enterprise-ready platform.

Key takeaways:

- Treat events as a real-time diagnostic tool: Use `kubectl get events` to quickly understand why a pod is pending or crashing, but remember its one-hour retention window makes it unsuitable for investigating past incidents.
- Filter events to speed up troubleshooting: In busy clusters, use flags like `--field-selector` to isolate specific resources or `Warning` types, helping you pinpoint the root cause of an issue without manual searching.
- Adopt a platform for enterprise-scale management: For multi-cluster environments, `kubectl` falls short; a centralized platform like Plural is necessary to aggregate events, provide a single dashboard, and enable long-term storage for complete observability.

What Is kubectl get events?

kubectl get events is a first-line diagnostic command that queries the Kubernetes API for recent event objects and renders them as a table. The rows are not guaranteed to appear in chronological order, so add a flag such as --sort-by=.metadata.creationTimestamp when the sequence matters. It surfaces control plane activity (scheduling decisions, image pulls, probe failures, restarts, and node conditions) scoped to a namespace or the entire cluster. During incident triage (e.g., a pod stuck in Pending or CrashLoopBackOff), it provides immediate, low-latency context about what the system is doing and why.

Kubernetes events are structured API resources emitted on significant state transitions. Each record includes a timestamp, type (Normal or Warning), reason (e.g., Scheduled, Failed, BackOff), the involved object, and a message. This schema enables fast root-cause isolation without scraping unstructured container logs—for example, distinguishing between scheduling failures, image pull errors (ImagePullBackOff), or liveness probe flaps.

The main constraint is retention. The API server keeps events for a short window (typically ~1 hour), making the command effective for real-time debugging but unsuitable for historical analysis or postmortems. For durable observability, teams should export events to an external store (e.g., via an event router to Elasticsearch/OpenSearch, Loki, or a SIEM) and integrate them with metrics and logs—something platforms like Plural can standardize across clusters.

What Event Types Can You Retrieve?

Kubernetes events are structured API objects that capture state transitions and control plane decisions for cluster resources. They provide a resource-scoped, high-signal view of system behavior—covering scheduling, image pulls, probe evaluations, restarts, and node conditions—without parsing unstructured container logs. These events are emitted by core components like the scheduler, kubelet, and controllers, making them essential for real-time diagnostics.

Normal vs. Warning Events

All events are categorized into two types:

- Normal: Informational signals confirming expected operations. Examples include pod scheduling (`Scheduled`), successful image pulls (`Pulled`), or container starts (`Started`). These form an execution trace of healthy system behavior.
- Warning: Indicators of failure or degraded state. Common reasons include `FailedScheduling`, `ImagePullBackOff`, `BackOff`, and `Unhealthy`. In practice, filtering by `type=Warning` is the fastest way to isolate issues during incident response.

While this binary classification is coarse, it’s effective when combined with reason and resource scoping to narrow down failure domains quickly.
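As a minimal sketch of the type filter described above, the helper below wraps the command in a shell function. The function name `warning_events` and the `default` namespace fallback are illustrative assumptions, not part of kubectl.

```shell
# Hypothetical helper: list only Warning events in one namespace.
# Usage: warning_events <namespace>   (falls back to "default")
warning_events() {
  # --field-selector is evaluated by the API server, so Normal
  # events are never sent to the client.
  kubectl get events -n "${1:-default}" --field-selector type=Warning
}
```

Running `warning_events kube-system`, for example, would show only the Warning rows for that namespace.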

Event Lifecycle and Retention

Events are ephemeral by design. The Kubernetes API server retains them in etcd for a short TTL (typically ~60 minutes) before garbage collection. This prevents unbounded growth in control plane storage but limits historical visibility.

Operationally, this means:

- `kubectl get events` is optimized for active debugging, not retrospective analysis.
- Post-incident workflows require exporting events to an external sink before expiry.

Common approaches include deploying an event router (e.g., via Fluent Bit or custom controllers) to ship events into systems like Elasticsearch, Loki, or cloud logging backends. Platforms like Plural can standardize this pipeline across clusters, ensuring events become part of a persistent observability layer.
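Before a full export pipeline is in place, a point-in-time snapshot can act as a stopgap. The sketch below assumes a hypothetical helper named `snapshot_events` and an output directory of your choosing; it is not a substitute for a proper event exporter, since anything that expires between runs is lost.

```shell
# Hypothetical helper: save a JSON snapshot of all current events.
# Usage: snapshot_events <output-dir>   (falls back to the current directory)
snapshot_events() {
  out_dir="${1:-.}"
  # A UTC timestamp in the filename keeps snapshots sortable.
  out_file="$out_dir/events-$(date -u +%Y%m%dT%H%M%SZ).json"
  # Print the path only if kubectl succeeded.
  kubectl get events --all-namespaces -o json > "$out_file" && echo "$out_file"
}
```

If you schedule something like this, the interval would need to be shorter than the event TTL for the snapshots to overlap.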

Severity Context: Reason and Message

The diagnostic value of an event comes from its structured fields:

- Reason: A concise, machine-readable code (e.g., `Failed`, `BackOff`, `Unhealthy`, `Scheduled`).
- Message: A detailed, human-readable explanation (e.g., image not found, probe timeout, insufficient CPU).
- Involved Object: The resource (Pod, Node, Deployment) the event pertains to.

For example, a Warning event with reason=Failed and a message indicating an image pull error immediately points to registry/authentication issues, not scheduling or runtime faults.

In practice, effective debugging combines:

- filtering by `type=Warning`
- grouping by `involvedObject`
- inspecting `reason` for classification
- reading `message` for root cause details

This structured approach turns events into a fast, deterministic signal for triaging cluster issues.

How to Troubleshoot Cluster Issues with kubectl get events

kubectl get events exposes a time-ordered stream of control plane activity, making it one of the fastest ways to move from a surface symptom to a concrete root cause. Instead of only observing that a pod is Pending or stuck in CrashLoopBackOff, events reveal why the system reached that state. They capture scheduler decisions, kubelet actions, and controller responses, providing a structured diagnostic layer that sits between high-level status and low-level logs.

While this workflow is straightforward on a single cluster, it becomes harder to operationalize across fleets where event streams are fragmented and short-lived. Centralizing and retaining events is necessary at scale, but the core debugging approach remains the same. Engineers should first learn to isolate and interpret events locally, then extend that workflow using platforms like Plural to aggregate and persist them across environments.

Pod Crash Loops and Restarts

When a pod enters CrashLoopBackOff, events describe the restart lifecycle and often hint at the failure mode. You will typically see repeated BackOff events indicating retry attempts, along with Unhealthy events if liveness or readiness probes are failing. These signals narrow the problem space quickly. A misconfigured probe, such as an incorrect endpoint or overly aggressive timeout, will surface clearly through repeated health check failures. If the container exits immediately after startup, events will show the restart pattern, guiding you to inspect application logs for runtime errors rather than investigating scheduling or infrastructure issues.

Image Pull and Registry Errors

For pods that fail before reaching a running state, image retrieval is a common failure point. Events make this explicit: the kubelet typically records Warning events with reasons such as Failed and BackOff, while the pod status shows ErrImagePull or ImagePullBackOff. The associated message usually contains the exact failure condition, whether it is an invalid image tag, a missing repository, an authentication failure for a private registry, or a network connectivity issue. This structured feedback allows you to focus directly on image configuration and registry access instead of debugging unrelated parts of the system.

Resource Quotas and Scheduling Failures

Pods that remain in Pending are blocked at the scheduling phase, and events provide the scheduler’s decision rationale. A FailedScheduling event includes a message that explains why no node satisfies the pod’s constraints. This could be due to insufficient CPU or memory, mismatched node selectors or affinity rules, unmet tolerations for taints, or namespace-level quota limits. Rather than guessing, you can treat the scheduler’s message as a precise constraint violation and adjust either cluster capacity or pod specifications accordingly.
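To read the scheduler's rationale directly, you can filter on the event reason. This is a small sketch; the function name `failed_scheduling` is an assumption made for illustration.

```shell
# Hypothetical helper: show scheduler rejections across all namespaces,
# oldest first, so the MESSAGE column can be read as a sequence.
failed_scheduling() {
  kubectl get events --all-namespaces \
    --field-selector reason=FailedScheduling \
    --sort-by=.metadata.creationTimestamp
}
```

The MESSAGE column of each row is where the unmet constraint (resources, taints, affinity) is reported.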

Node Pressure and Capacity Issues

Some failures originate from node health rather than workload configuration. Events emitted at the node level expose resource pressure conditions such as memory exhaustion, disk shortages, or PID limits. These conditions can lead to pod evictions or prevent new pods from being scheduled. When multiple workloads exhibit instability, correlating their behavior with node pressure events helps distinguish between application-level issues and infrastructure constraints. Addressing these signals typically involves scaling resources, redistributing workloads, or tuning resource requests and limits to stabilize cluster behavior.
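Node-level events can be isolated by filtering on the involved object's kind and name. The helper name `node_events` and the node name in the usage line are placeholders; the sketch searches all namespaces rather than assuming where node events are recorded.

```shell
# Hypothetical helper: show events whose involved object is a given Node.
# Usage: node_events <node-name>
node_events() {
  kubectl get events --all-namespaces \
    --field-selector "involvedObject.kind=Node,involvedObject.name=$1"
}
```

For example, `node_events worker-1` would list pressure or readiness events recorded against a node called `worker-1`, if such a node exists in your cluster.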

Using kubectl get events: Syntax and Filtering

kubectl get events is most useful when you treat it as a query interface over structured control plane signals rather than a raw feed. The default output is a flat table that is not reliably sorted by time and quickly becomes noisy in active clusters. Effective troubleshooting depends on scoping, filtering, sorting, and reshaping this data so you can isolate causality with minimal cognitive overhead.

At a high level, your workflow should move from broad inspection to tightly scoped queries. Start with namespace or cluster-level visibility, then progressively constrain by object, type, or reason. Once you’ve identified the signal, switch to structured output formats if you need deeper inspection or automation. This pattern scales from single-cluster debugging to fleet-wide observability pipelines, where platforms like Plural can standardize event ingestion and querying.

Basic Syntax and Namespace Targeting

Running kubectl get events without flags queries the current namespace context. This is sufficient for localized debugging but often misleading if the issue spans multiple workloads or namespaces. Use -n <namespace> to scope explicitly, or --all-namespaces when the failure domain is unclear. The latter is particularly useful for identifying systemic issues such as node failures or cluster-wide scheduling constraints, where relevant events may be distributed across namespaces.
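The scoping options above can be sketched as one helper that takes an optional namespace. The name `recent_events` is hypothetical, and the `--sort-by` flag is added here because plain output is not guaranteed to be in time order.

```shell
# Hypothetical helper: list events for one namespace, or for every
# namespace when no argument is given, sorted by creation time.
# Usage: recent_events [namespace]
recent_events() {
  if [ -n "${1:-}" ]; then
    kubectl get events -n "$1" --sort-by=.metadata.creationTimestamp
  else
    kubectl get events --all-namespaces --sort-by=.metadata.creationTimestamp
  fi
}
```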

Filtering with Field Selectors

In non-trivial clusters, unfiltered events are effectively unusable. The --field-selector flag provides server-side filtering, which is critical for performance and clarity. Filtering by type=Warning is the fastest way to isolate failure signals, while involvedObject.name=<resource> scopes events to a specific pod or resource under investigation.

You can combine selectors to reduce noise further. For example, filtering by both object name and event type allows you to focus exclusively on failure modes for a single workload. This turns events into a precise diagnostic stream rather than a general activity log.
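Selectors are combined with commas, and every condition must match. The following sketch assumes a hypothetical helper called `pod_warnings`; the pod and namespace names are whatever you pass in.

```shell
# Hypothetical helper: show only Warning events for a single pod.
# Usage: pod_warnings <pod-name> [namespace]
pod_warnings() {
  # Comma-separated field selectors are ANDed together.
  kubectl get events -n "${2:-default}" \
    --field-selector "involvedObject.kind=Pod,involvedObject.name=$1,type=Warning"
}
```

Field selectors also accept `!=`, so `type!=Normal` is an alternative way to express the same type filter.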

Formatting Output: JSON, YAML, and Custom Views

The default tabular output is optimized for quick inspection but lacks depth. For detailed analysis or automation, use structured formats like -o json or -o yaml, which expose the full event schema. This is particularly useful when integrating with scripts or external tooling.

For interactive use, -o custom-columns provides a middle ground by letting you define a concise, high-signal view. Selecting fields such as timestamp, reason, involved object, and message reduces visual clutter and surfaces only what matters during debugging. This approach is especially effective when iterating quickly on failures.
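One possible column set for such a view is sketched below. The helper name `events_table` and the column headers are illustrative choices; the field paths refer to standard fields on the event object, though `lastTimestamp` may be empty for some events depending on how they were emitted.

```shell
# Hypothetical helper: compact event table with a timestamp column.
# Usage: events_table [namespace]
events_table() {
  kubectl get events -n "${1:-default}" \
    -o custom-columns='LAST_SEEN:.lastTimestamp,TYPE:.type,REASON:.reason,OBJECT:.involvedObject.name,MESSAGE:.message'
}
```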

Real-Time Monitoring with --watch

Static snapshots are often insufficient during deployments or incident response. The --watch flag converts the command into a live stream, pushing new events as they are created. This is essential for understanding temporal sequences, such as the order of scheduling, image pulling, container startup, and probe evaluation.

In practice, --watch is most valuable when paired with scoped filters. Streaming all cluster events is rarely useful, but watching a single pod or namespace provides immediate feedback on system behavior. This allows you to validate fixes in real time and capture transient errors that may not persist long enough to appear in a later query.
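A scoped watch might look like the sketch below. The function name `watch_pod_events` is an assumption; the command keeps running until you interrupt it (for example with Ctrl+C).

```shell
# Hypothetical helper: stream events for one pod as they are created.
# Usage: watch_pod_events <pod-name> [namespace]
watch_pod_events() {
  kubectl get events -n "${2:-default}" --watch \
    --field-selector "involvedObject.name=$1"
}
```

Starting the watch just before you apply a fix or roll out a change lets you see scheduling, image pull, and probe events in the order they arrive.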

The Limitations of kubectl get events

While kubectl get events is a fundamental command for real-time troubleshooting, relying on it exclusively can lead to significant blind spots, especially in production environments. Its design prioritizes immediate feedback over long-term analysis, which introduces several limitations that can slow down incident response and root cause analysis. Understanding these constraints is the first step toward building a more robust observability strategy for your Kubernetes fleet.

The 60-Minute Retention Limit

The most significant limitation is the ephemeral nature of Kubernetes events. By default, events are only retained for one hour. This short retention window means that if an issue occurred overnight or even just a few hours ago, the relevant event data is likely gone by the time an engineer starts investigating. This makes it nearly impossible to diagnose past incidents or identify patterns in intermittent failures that occur outside this brief timeframe. Without a mechanism to store events long-term, teams lose critical historical context that is essential for comprehensive troubleshooting and post-mortems.

Managing High-Volume Output in Large Clusters

In any large-scale Kubernetes cluster, the stream of events can quickly become a flood of information. A single deployment can generate hundreds of events, and in a multi-tenant environment, the output of kubectl get events can be overwhelming. While you can use field selectors to filter the noise, such as showing only non-normal events, this adds complexity to your queries. Sifting through this high-volume output to find the signal is a time-consuming manual process that is prone to error, making it difficult to quickly pinpoint the root cause of an issue amidst the chatter of routine operations.

Lacking Context for Root Cause Analysis

Events provide clues, but they rarely tell the whole story. An event like FailedScheduling tells you that the scheduler could not place a pod, and its message names the unmet constraint, but it does not show how the cluster got there. Did another workload consume the capacity, was a taint added recently, or did an affinity rule change? To find the answer, you need to correlate the event with other data sources like pod logs, node metrics, and resource configurations. Because Kubernetes events are not stored in a conventional log format, they are difficult to integrate into a broader analysis without specialized tools. This lack of context forces engineers to manually piece together disparate information, slowing down the investigation.

Correlating Events Across Namespaces

Modern applications are often distributed across multiple namespaces, but kubectl get events operates within the confines of these boundaries. While you can request events across all namespaces using the -A flag, the command doesn't inherently correlate related activities. For example, a failure in a database pod in the data-services namespace might cause cascading failures in application pods in the app-services namespace. Manually tracing this chain of events across different outputs is challenging and inefficient. This siloed view makes it difficult to understand the full blast radius of an issue and how different components are interacting within the cluster.

Advanced Techniques and Best Practices

Mastering kubectl get events involves more than just running the basic command. To troubleshoot effectively, especially in complex environments, you need to combine it with other tools and techniques. By refining your approach, you can move from simply viewing events to actively analyzing them, allowing you to pinpoint the root cause of an issue with greater speed and precision. These advanced practices help you filter out noise, correlate information from different sources, and recognize patterns that signal underlying problems in your cluster.

Combine events with describe and logs

Events provide the "what," but to understand the "why," you need more context. The most effective troubleshooting workflow combines events with describe and logs. While kubectl get events gives you a timeline for a namespace (or, with -A, for the whole cluster), kubectl describe offers a focused view. When you run kubectl describe on a specific resource, like a pod or deployment, it includes a section listing recent events related to only that object. This is often the best next step after you spot a warning in the main event log.

Once describe helps you narrow your focus to a specific pod, kubectl logs provides the application-level details. For instance, a CrashLoopBackOff event tells you a pod is failing, but the pod’s logs will show the actual application error, like a database connection failure or a configuration error, that is causing the crash. This three-step process, moving from events to describe to logs, creates a powerful diagnostic path from a high-level cluster event down to a specific line of code.
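The three steps can be chained in a small script. This is a sketch under stated assumptions: the helper name `triage_pod` and the 50-line log tail are arbitrary choices, and `--previous` only returns output if the container has already restarted at least once.

```shell
# Hypothetical helper: events -> describe -> logs for one pod.
# Usage: triage_pod <pod-name> [namespace]
triage_pod() {
  pod="$1"
  ns="${2:-default}"
  echo "== events =="
  kubectl get events -n "$ns" --field-selector "involvedObject.name=$pod"
  echo "== describe =="
  kubectl describe pod "$pod" -n "$ns"
  echo "== logs (previous container instance) =="
  # --previous reads the last terminated container, which is usually
  # the one that holds the crash output in a CrashLoopBackOff.
  kubectl logs "$pod" -n "$ns" --previous --tail=50
}
```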

Filter by Involved Object and Event Reason

In a busy cluster, the output of kubectl get events can be overwhelming. The key to managing this is effective filtering. Instead of manually searching through hundreds of lines, you can use the --field-selector flag to isolate exactly what you need. This allows you to filter events based on specific resource attributes.

For example, to see events for a single pod, you can run: `kubectl get events --field-selector involvedObject.kind=Pod,involvedObject.name=my-pod-xyz`

This command instantly cuts through the noise and shows only the events affecting my-pod-xyz. You can also filter by the event reason, such as FailedScheduling or Unhealthy, to find all instances of a particular problem across a namespace. Combining these selectors helps you quickly target specific issues, making your troubleshooting process much more efficient.

Use Custom Columns for Targeted Analysis

The default columns in kubectl get events are useful, but they don't always present information in the most efficient way for every scenario. To create a more targeted view, you can format the output with the -o custom-columns flag. This feature lets you specify exactly which fields you want to see and assign your own column headers.

For instance, if you only care about the event reason, the affected object, and the message, you could use a command like this: `kubectl get events -o custom-columns=REASON:.reason,OBJECT:.involvedObject.name,MESSAGE:.message`

This produces a clean, table-like output that is much easier to scan for critical information. Custom columns are especially useful when you are scripting automated checks or when you need to share a concise summary of recent events with your team. It helps you focus on the data that matters most for the problem at hand.

Identify Event Patterns for Faster Diagnosis

As you become more familiar with your cluster, you’ll start to recognize recurring sequences of events that signal specific problems. Identifying these patterns is a powerful technique for accelerating diagnosis. Events document the lifecycle of Kubernetes objects, showing the steps from creation to running state. A deviation from the normal pattern is often the first sign of trouble.

For example, a FailedScheduling event for a pod might be followed by a NodeNotReady event, indicating the issue isn't with the pod itself but with the health of your nodes. Similarly, a series of Unhealthy events reporting failed liveness probes, followed by a BackOff event, points directly to an application health issue within a container. By learning to recognize these event chains, you can move beyond fixing individual symptoms and start addressing the root cause of recurring failures in your system.
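A quick way to spot recurring patterns is to tally events by type and reason. The sketch below assumes a hypothetical helper named `reason_counts`; it counts event objects, so repeated occurrences that Kubernetes has already folded into a single event are counted once.

```shell
# Hypothetical helper: count events per (type, reason), most frequent first.
# Usage: reason_counts [namespace]
reason_counts() {
  kubectl get events -n "${1:-default}" --no-headers \
    -o custom-columns='TYPE:.type,REASON:.reason' \
    | sort | uniq -c | sort -rn
}
```

A reason that dominates the top of this list is usually a good first candidate for a scoped query with `--field-selector`.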

How Do You Manage Events at Enterprise Scale?

While kubectl get events is an essential command for real-time diagnostics, its utility diminishes in large, multi-cluster environments. Managing events across a fleet of Kubernetes clusters introduces challenges related to data retention, centralization, and analysis. At enterprise scale, you need a systematic approach to transform event data from a transient stream into a reliable source for observability, auditing, and automated response. This requires moving beyond manual command-line checks and adopting tools and practices built for complexity and scale.

Storing Events for Long-Term Retention

By default, Kubernetes retains events for only 60 minutes. This short lifecycle makes them unsuitable for historical analysis, compliance audits, or post-incident forensics. To overcome this, you must export events to a durable, long-term storage solution. A common strategy involves deploying an event exporter tool within your cluster. These tools watch the Kubernetes API for new events and forward them to an external system like Elasticsearch, Loki, or a cloud storage bucket. The Kubernetes event exporter is a popular open-source option that can route events to various destinations. This ensures you have a complete historical record, allowing you to analyze trends and investigate past incidents long after the original events have expired from the cluster.

Centralizing Monitoring Across Clusters

Running kubectl get events against individual clusters is inefficient and doesn't scale when you're managing a fleet. Platform and DevOps teams need a centralized view to monitor the health of all clusters without juggling multiple terminals and kubeconfig files. A unified control plane provides this single pane of glass, aggregating events from every environment into one searchable interface. Plural’s embedded Kubernetes dashboard offers this capability, allowing you to securely access and filter events from any managed cluster. This centralized approach simplifies troubleshooting by providing a holistic view of your entire infrastructure, making it easier to spot systemic issues that affect multiple clusters simultaneously.

Automating Event Analysis and Alerting

Manually monitoring event streams is a reactive process that can delay responses to critical issues. A more effective strategy is to automate event analysis and configure proactive alerting. Tools like Kubewatch can monitor a cluster’s event stream and trigger notifications in Slack, PagerDuty, or other communication channels when specific patterns are detected. For example, you can create alerts for critical events like FailedScheduling, ImagePullBackOff, or persistent CrashLoopBackOff warnings. This automation transforms events into actionable signals, enabling your team to address problems as they happen instead of waiting for them to cause a service disruption. This proactive stance is crucial for maintaining high availability in production environments.

Integrating with Observability Platforms

Events provide the "why" behind a cluster's state changes, but they are most powerful when correlated with other observability data like metrics, logs, and traces. For instance, a FailedScheduling event due to insufficient CPU is more informative when viewed alongside a Grafana dashboard showing node resource utilization. Integrating event data into a comprehensive observability platform allows you to connect the dots between an event and its root cause. By shipping events to a log store like Grafana Loki, or exposing event counts as Prometheus metrics, you can build dashboards that overlay event markers on metric charts or link directly from an event to the relevant pod logs, dramatically speeding up root cause analysis.

Streamline Event Management with Plural

While kubectl get events is a fundamental tool for troubleshooting, its effectiveness diminishes as your Kubernetes fleet grows. Manually polling events across dozens or hundreds of clusters is not a scalable strategy. It leads to missed signals, slow response times, and an incomplete understanding of your system's health. Plural addresses these limitations by providing a centralized platform for managing Kubernetes events at an enterprise scale.

Get Fleet-Wide Visibility in a Unified Dashboard

Kubernetes events are records of state changes and errors within a cluster, such as a pod being scheduled or failing to start. While kubectl lets you view these events, managing them across a large fleet is a different story. Constantly switching contexts to poll individual clusters is inefficient and makes it impossible to see the bigger picture.

Plural provides a unified dashboard that consolidates events from every cluster in your fleet into a single pane of glass. This eliminates the need to juggle kubeconfigs and provides an immediate, fleet-wide view of cluster health, helping your team spot systemic issues before they escalate.

Aggregate and Filter Events at Scale

With kubectl, you can use flags like --field-selector to filter events by type, which is useful for isolating warnings or errors on a single cluster. However, this manual approach doesn't scale when you need to investigate an issue across multiple environments. You can't easily search for a specific event pattern across your entire production and staging fleet.

Plural aggregates events from all managed clusters, allowing you to perform powerful, fleet-wide searches and filtering from a central UI. You can instantly isolate all ImagePullBackOff errors or track a specific deployment's events across every cluster it targets. This centralized approach turns event data from noise into an actionable signal for troubleshooting.

Access Events Securely Across Environments

Events are stored as objects in the apiserver, which means accessing them requires direct API access to the cluster. For clusters in private networks or on-premises data centers, this often involves cumbersome VPNs, bastion hosts, or complex network configurations that can introduce security risks.

Plural’s agent-based architecture solves this problem by establishing a secure, egress-only connection from each managed cluster to the control plane. This allows you to access events and interact with the Kubernetes API through the Plural dashboard without ever exposing your clusters to inbound traffic. You get full visibility into every environment while maintaining a strict security posture.

Automate Correlation and Intelligent Alerting

Watching events helps you find and fix problems, but manually monitoring a high-volume event stream is impractical. The real challenge is correlating events with other telemetry data, like logs and metrics, to understand the root cause of an issue. kubectl alone provides the event data but lacks the context needed for deep analysis.

Plural provides the foundation for building automated workflows around event data. By centralizing events, you can more easily integrate them with observability platforms like Prometheus or Grafana. This enables you to create intelligent alerts that trigger on specific event patterns, correlate events with performance metrics, and reduce the mean time to resolution for critical incidents.

Frequently Asked Questions

What's the real difference between Kubernetes events and container logs?

Kubernetes events and container logs provide two different views of your system. Events are structured messages from the Kubernetes control plane about the lifecycle of resources like pods, nodes, and deployments. Think of them as the cluster's administrative log, telling you when a pod was scheduled or why it failed to start. Container logs, on the other hand, are the unstructured output (stdout and stderr) from the application running inside your container. Events tell you a pod is in a CrashLoopBackOff state; logs tell you the specific application error that caused the crash.

Why are Kubernetes events deleted after only one hour?

The short one-hour retention period for events is a deliberate design choice to protect the performance and stability of the cluster's etcd datastore. Since etcd is the central database for all cluster state, overloading it with a long history of event data could slow down the entire Kubernetes API. This default behavior makes events useful for real-time debugging but requires an external solution for long-term storage, historical analysis, or compliance auditing.

My pod is stuck in CrashLoopBackOff. What's the most effective way to diagnose it using events?

When a pod is in a crash loop, start by isolating events related to that specific pod using a field selector. This will show you a history of Warning events, often with a BackOff reason, indicating repeated restart attempts. Next, use `kubectl describe pod <pod-name>` to see a filtered list of these events along with other configuration details. Finally, check the application's output with `kubectl logs <pod-name>` to find the specific error message causing the container to exit. This three-step process efficiently moves from the cluster-level symptom to the application-level root cause.

The output of kubectl get events is too noisy. How can I find the specific information I need?

In a busy cluster, the raw event stream can be overwhelming. The most effective way to manage this is by using the `--field-selector` flag to filter the output. You can narrow the results to only show Warning types or focus on events related to a specific object, like a failing pod or deployment. For a cleaner view, you can also format the output with `-o custom-columns` to display only the most relevant fields, such as the event reason, the affected object, and the message.

How does a platform like Plural help with event management beyond what kubectl can do?

While kubectl is essential for single-cluster diagnostics, it doesn't scale for managing a fleet. Plural centralizes event management by aggregating events from all your clusters into a single, searchable dashboard. This allows you to retain event data long-term for historical analysis and provides a fleet-wide view to identify systemic issues. Plural's secure, agent-based architecture also lets you access events from private or on-prem clusters without complex network configurations, simplifying troubleshooting and improving your overall security posture.
